Machine Learning Research

Cutting the Cost of Pretrained Models: FrugalGPT, a method to cut AI costs and maintain quality

Research aims to help users select large language models that minimize expenses while maintaining quality.
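FrugalGPT's main strategy, as its authors describe it, is an LLM cascade. A query goes to cheaper models first and escalates to a pricier one only when a scoring function judges the answer unreliable. The sketch below assumes that idea. The models, per-call costs, thresholds, and scorer are hypothetical stand-ins (the paper uses a trained scorer, not a hard-coded rule), and none of these names come from FrugalGPT itself.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Tier:
    name: str
    cost: float                   # hypothetical price per call, arbitrary units
    answer: Callable[[str], str]  # stand-in for a call to one model's API
    threshold: float              # accept this tier's answer if its score reaches this


def cascade(query: str, tiers: List[Tier],
            score: Callable[[str, str], float]) -> Tuple[str, str, float]:
    """Query tiers from cheapest to priciest; stop at the first acceptable answer.

    Returns (tier name, answer, total cost spent). The last tier's answer is
    always accepted.
    """
    spent = 0.0
    for i, tier in enumerate(tiers):
        answer = tier.answer(query)
        spent += tier.cost
        if i == len(tiers) - 1 or score(query, answer) >= tier.threshold:
            return tier.name, answer, spent
    raise ValueError("need at least one tier")


# Hypothetical models and scorer, for illustration only.
def small_model(q: str) -> str:
    return "4" if q == "2+2" else "not sure"


def large_model(q: str) -> str:
    return "4" if q == "2+2" else "Paris"


def toy_score(q: str, a: str) -> float:
    return 0.1 if a == "not sure" else 0.9  # a real scorer would be a trained model


tiers = [Tier("small", 1.0, small_model, 0.5), Tier("large", 20.0, large_model, 0.5)]
print(cascade("2+2", tiers, toy_score))                 # ('small', '4', 1.0)
print(cascade("Capital of France?", tiers, toy_score))  # ('large', 'Paris', 21.0)
```

The easy query never touches the expensive model. The harder one pays for both calls, which is the trade-off a cascade accepts in exchange for skipping the large model whenever the small one is good enough.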

Machine Learning Research

Robots equipped with large language models are asking their human overseers for help.

Machine Learning Research

The technique known as reinforcement learning from human feedback fine-tunes large language models to be helpful and avoid generating harmful responses such as suggesting illegal or dangerous activities. An alternative method streamlines this approach and achieves better results.

Machine Learning Research

Machine learning models typically learn language by training on tasks like predicting the next word in a given text. Researchers trained a language model in a less focused, more human-like way.

Machine Learning Research

Large language models are not good at math. Researchers devised a way to make them better. Tiedong Liu and Bryan Kian Hsiang Low at the National University of Singapore proposed a method to fine-tune large language models for arithmetic tasks.

Tech & Society

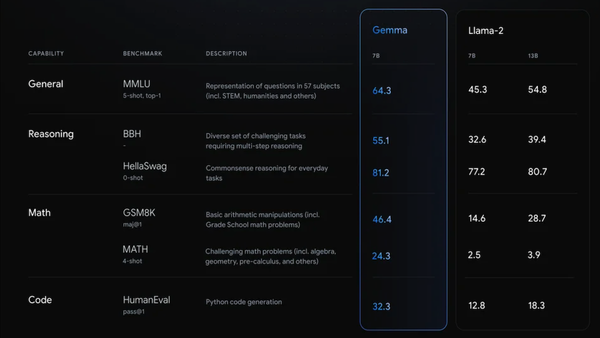

Google asserted its open-source bona fides with new models. It released weights for Gemma-7B, an 8.5 billion-parameter large language model intended to run on GPUs, and Gemma-2B, a 2.5 billion-parameter version intended for deployment on CPUs and edge devices.

Machine Learning Research

Reinforcement learning from human feedback (RLHF) is widely used to fine-tune pretrained models to deliver outputs that align with human preferences. New work aligns pretrained models without the cumbersome step of reinforcement learning.
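The teaser doesn't name the technique. One widely cited way to align on preference data without a reinforcement-learning loop is Direct Preference Optimization (DPO), and the sketch below assumes it. For a prompt x with a preferred response y_w and a rejected response y_l, DPO minimizes −log σ(β[(log π(y_w|x) − log π_ref(y_w|x)) − (log π(y_l|x) − log π_ref(y_l|x))]). Here π is the model being trained, π_ref is a frozen copy of the starting model, and β plays the role of the KL-penalty strength in RLHF. Log-probabilities are passed in as plain numbers. In practice they would be summed token log-probabilities from the two models.

```python
import math


def softplus(z: float) -> float:
    """log(1 + exp(z)), computed without overflow for large |z|."""
    if z > 0:
        return z + math.log1p(math.exp(-z))
    return math.log1p(math.exp(z))


def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for a single preference pair.

    Each argument is a sequence log-probability log p(y | x), taken from the
    model being trained (policy_*) or from a frozen reference copy (ref_*).
    """
    # How much more (in log space) the policy favors each response than the reference does.
    chosen_margin = policy_chosen - ref_chosen
    rejected_margin = policy_rejected - ref_rejected
    z = beta * (chosen_margin - rejected_margin)
    return softplus(-z)  # equals -log(sigmoid(z))


print(dpo_loss(-10.0, -10.0, -10.0, -10.0))                 # log(2): policy still matches the reference
print(dpo_loss(-8.0, -12.0, -10.0, -10.0) < math.log(2))   # True: policy now prefers the chosen response
```

Because the loss needs only log-probabilities from two models, training reduces to ordinary supervised gradient descent, with no separate reward model or policy rollouts.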

Machine Learning Research

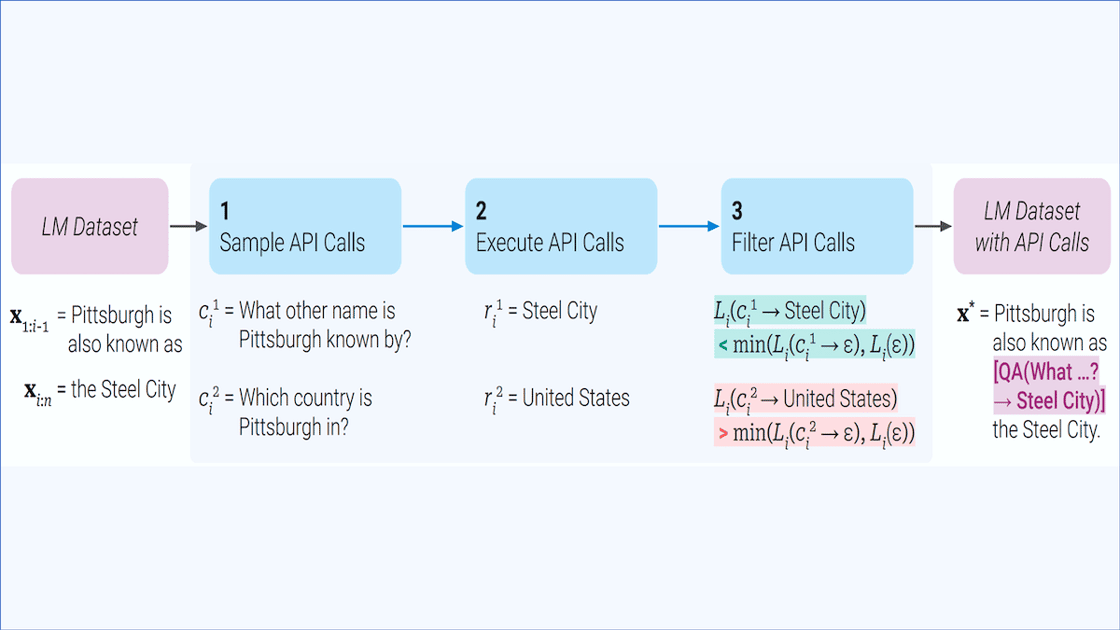

Language models equipped for retrieval-augmented generation can retrieve text from a database to improve their output. Further work extends this capability to retrieve information from any application that comes with an API.
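A toy sketch of the retrieval step follows. It scores documents by shared words, a crude stand-in for the learned embeddings that real systems typically use, and prepends the best match to the prompt. The documents, scoring function, and prompt template are all hypothetical.

```python
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    """Lowercase bag of words."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(query: Counter, doc: Counter) -> int:
    """Count shared word occurrences (stand-in for embedding similarity)."""
    return sum((query & doc).values())


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents that share the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: overlap(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: List[str], k: int = 1) -> str:
    """Prepend retrieved text so the model can ground its answer in it."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


docs = [
    "The Eiffel Tower is in Paris.",
    "Gemma-2B targets CPUs and edge devices.",
    "LOMO reduces optimizer memory.",
]
query = "Which devices does Gemma-2B target?"
print(build_prompt(query, docs))
```

Extending retrieval to arbitrary applications amounts to replacing `retrieve` with a call to the relevant API and inserting its response as context in the same way.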

Machine Learning Research

Pruning weights from a neural network makes it smaller and faster, but it can take a lot of computation to choose which weights can be removed without degrading the network’s performance.
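For reference, the cheapest common baseline is magnitude pruning. It zeroes the weights with the smallest absolute values, with no extra training or search. The NumPy sketch below shows only that baseline, not the method in the research described here, and the sparsity level in the usage lines is an arbitrary example.

```python
import numpy as np


def magnitude_prune(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Return a copy of `weights` with the smallest-magnitude fraction set to zero."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(sparsity * weights.size)  # number of weights to remove
    if k == 0:
        return weights.copy()
    flat = np.abs(weights).ravel()
    # Indices of the k smallest magnitudes (ties broken arbitrarily).
    drop = np.argpartition(flat, k - 1)[:k]
    mask = np.ones(weights.size, dtype=bool)
    mask[drop] = False
    return weights * mask.reshape(weights.shape)


w = np.array([[0.5, -0.1, 0.03],
              [-0.8, 0.2, -0.02]])
pruned = magnitude_prune(w, 0.5)  # removes the 3 smallest-magnitude weights
print(pruned)
```

Magnitude alone ignores how weights interact, which is why it can hurt accuracy at high sparsity. More careful selection criteria cost the extra computation the paragraph above refers to.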

Machine Learning Research

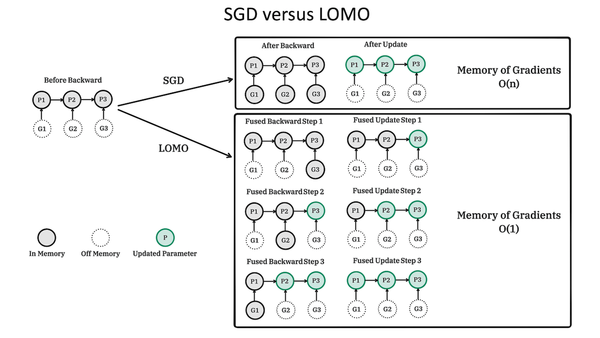

Researchers devised a way to reduce memory requirements when fine-tuning large language models. Kai Lv and colleagues at Fudan University proposed low memory optimization (LOMO), a modification of stochastic gradient descent that stores less data than other optimizers during fine-tuning.
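The LOMO paper's central idea is to fuse gradient computation with the SGD update. Instead of holding gradients for every parameter until an optimizer step, it updates each parameter as soon as its gradient is available and then discards that gradient. The NumPy sketch below illustrates that idea on a hypothetical two-layer network with a hand-written backward pass. The network, loss, and learning rate are assumptions for illustration, not the authors' code. It also checks that the fused update matches conventional SGD.

```python
import numpy as np
from typing import List


def forward(weights: List[np.ndarray], x: np.ndarray) -> List[np.ndarray]:
    """Linear layers with ReLU between them; returns every layer's input plus the output."""
    acts = [x]
    for i, w in enumerate(weights):
        h = acts[-1] @ w
        if i < len(weights) - 1:
            h = np.maximum(h, 0.0)
        acts.append(h)
    return acts


def fused_sgd_step(weights: List[np.ndarray], x: np.ndarray, y: np.ndarray, lr: float) -> None:
    """Backpropagate layer by layer, updating each weight as soon as its gradient exists.

    At most one layer's weight gradient is held in memory at a time.
    """
    acts = forward(weights, x)
    g = (acts[-1] - y) / len(x)  # gradient of 0.5 * squared error averaged over the batch
    for i in reversed(range(len(weights))):
        if i < len(weights) - 1:
            g = g * (acts[i + 1] > 0)  # back through the ReLU that follows layer i
        grad_w = acts[i].T @ g
        g = g @ weights[i].T  # gradient for the layer below, using the pre-update weight
        weights[i] -= lr * grad_w  # update immediately...
        del grad_w                 # ...then drop the gradient


def standard_sgd_step(weights: List[np.ndarray], x: np.ndarray, y: np.ndarray, lr: float) -> None:
    """Conventional SGD: keep every gradient, then update all weights together."""
    acts = forward(weights, x)
    g = (acts[-1] - y) / len(x)
    grads = [None] * len(weights)
    for i in reversed(range(len(weights))):
        if i < len(weights) - 1:
            g = g * (acts[i + 1] > 0)
        grads[i] = acts[i].T @ g
        g = g @ weights[i].T
    for w, grad in zip(weights, grads):
        w -= lr * grad


rng = np.random.default_rng(0)
x, y = rng.normal(size=(8, 4)), rng.normal(size=(8, 2))
init = [rng.normal(size=(4, 5)), rng.normal(size=(5, 2))]
a = [w.copy() for w in init]
b = [w.copy() for w in init]
fused_sgd_step(a, x, y, lr=0.1)
standard_sgd_step(b, x, y, lr=0.1)
print(all(np.allclose(p, q) for p, q in zip(a, b)))  # True: same update, less gradient memory
```

The fused version must compute the gradient for the layer below before overwriting the current weight. Otherwise, backpropagation would use already-updated values.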

Machine Learning Research

The longer text-to-image models train, the better their output — but the training is costly. Researchers built a system that produced superior images after far less training.

Machine Learning Research

Most people understand that others’ mental states can differ from their own. For instance, if your friend leaves a smartphone on a table and you privately put it in your pocket, you understand that your friend continues to believe it was on the table.