US government to vet new models: ChatGPT gets a model refresh

Anthropic’s latest products for banking and finance. Drug manufacturers get AI gains they didn’t expect. US military augments Anthropic with new partners. Google DeepMind’s UK unionization vote.

In today’s edition of Data Points, you’ll learn more about:

- Anthropic’s latest products for banking and finance

- Drug manufacturers get AI gains they didn’t expect

- US military augments Anthropic with new partners

- Google DeepMind’s UK unionization vote

But first:

US signals it will more closely regulate AI industry

The White House is preparing an executive order to create a vetting system for new artificial intelligence models, modeled on FDA drug approval processes, according to National Economic Council Director Kevin Hassett. The move follows Anthropic’s disclosure that its Mythos model can identify network vulnerabilities and poses potential cybersecurity risks; the Trump administration is currently testing the model across federal agencies and large tech firms before wider release. Hassett said the vetting process would likely extend to all AI companies, not just Anthropic, though it remains unclear whether the order would mandate testing or operate as a voluntary framework. The announcement represents a potential shift toward regulation for an administration that has generally favored hands-off AI policy. Separately, the Commerce Department expanded a voluntary testing program on Tuesday, with Google, Microsoft, and xAI now providing the government early access to assess their models’ security and capabilities. (Bloomberg)

ChatGPT model reduces hallucinations with warm, concise answers

OpenAI rolled out GPT-5.5 Instant as the default model for ChatGPT, replacing GPT-5.3 Instant for all users. The update targets factuality and clarity: internal evaluations show the new model produced 52.5% fewer hallucinated claims on high-stakes prompts in medicine, law, and finance, and cut inaccurate statements by 37.3% on conversations users had flagged for errors. Responses are tighter and more concise while retaining conversational warmth, and the model improved at analyzing images, handling STEM questions, and deciding when to use web search. OpenAI also introduced memory sources—a transparency feature showing which past chats, files, or Gmail context shaped a personalized response, with controls to delete or correct outdated information. For paid users, GPT-5.3 Instant remains available for three months; enhanced personalization features are rolling out to Plus and Pro users now, with Free and other tiers coming in the following weeks. (OpenAI)

Claude for finance: new agentic tools for banks and traders

Anthropic released ten agent templates that handle labor-intensive financial work—building pitchbooks, screening KYC documents, reconciling ledgers, and closing the books each month. The templates ship as plugins in Claude Cowork and Claude Code, or as cookbooks for Claude Managed Agents, letting firms deploy them in days instead of building from scratch. Each template bundles task-specific instructions, governed data connectors, and subagents that handle discrete subtasks like comparables selection. Claude now works across Excel, PowerPoint, Word, and Outlook through add-ins that carry context between apps automatically—an analyst who starts a model in Excel doesn’t need to re-explain it when drafting a deck in PowerPoint. Anthropic also added eight new data connectors, including Dun & Bradstreet, Guidepoint, and Verisk, plus a Moody’s MCP app that embeds proprietary credit ratings on 600 million companies directly inside Claude. The agents run on Claude Opus 4.7, which leads Vals AI’s Finance Agent benchmark at 64.37 percent. (Anthropic)

Big pharma finds AI valuable despite struggles with drug discovery

Major pharmaceutical companies including Eli Lilly, Roche, GSK, and AstraZeneca are pouring billions into AI partnerships with tech firms, betting that machine learning will accelerate drug development. Yet years of promises have failed to materialize into breakthrough discoveries—clinical trial success rates haven’t improved, and companies still lack sufficient training data and face high computational costs. The real wins so far come from back-office work and manufacturing optimization: Eli Lilly used AI to simulate and improve tirzepatide production, achieving what executives called “mind blowing” output gains without disclosing specifics. A few programs show promise—Recursion Pharmaceuticals cut experimental cancer drug design from four years to 18 months using AI, and Takeda’s AI-discovered psoriasis pill is heading for FDA approval—but most pharma AI value remains years away from proving itself in actual patient outcomes. RBC Capital Markets estimates the technology could save the U.S. pharma industry $90 billion over five years, though most of that comes from efficiency, not innovation. (Wall Street Journal)

US military expands partnerships with multiple big tech companies

The US Department of Defense signed agreements with Microsoft, Amazon, Nvidia, and Reflection AI to deploy their systems on classified operations, joining OpenAI, xAI, and Google as approved vendors. The move follows the Pentagon’s cancellation of a $200 million contract with Anthropic over disagreements about restricting the company’s technology from domestic surveillance and autonomous weapons development. CEO Dario Amodei sought those restrictions, but the Trump administration rejected them as overly limiting. The Pentagon framed the expansion as preventing vendor lock-in and building an “AI-first fighting force,” with approved systems handling the most sensitive classified materials. Yet according to reporting from Axios, White House sources suggest the administration may be looking for ways to bring Anthropic back into the fold, even as the company pursues litigation for lost revenues. Anthropic’s Claude coding model reportedly remains in use across US government security agencies despite the public rift, and its specialized Mythos model is under assessment by 40 organizations worldwide for cyber defense applications. (AI News)

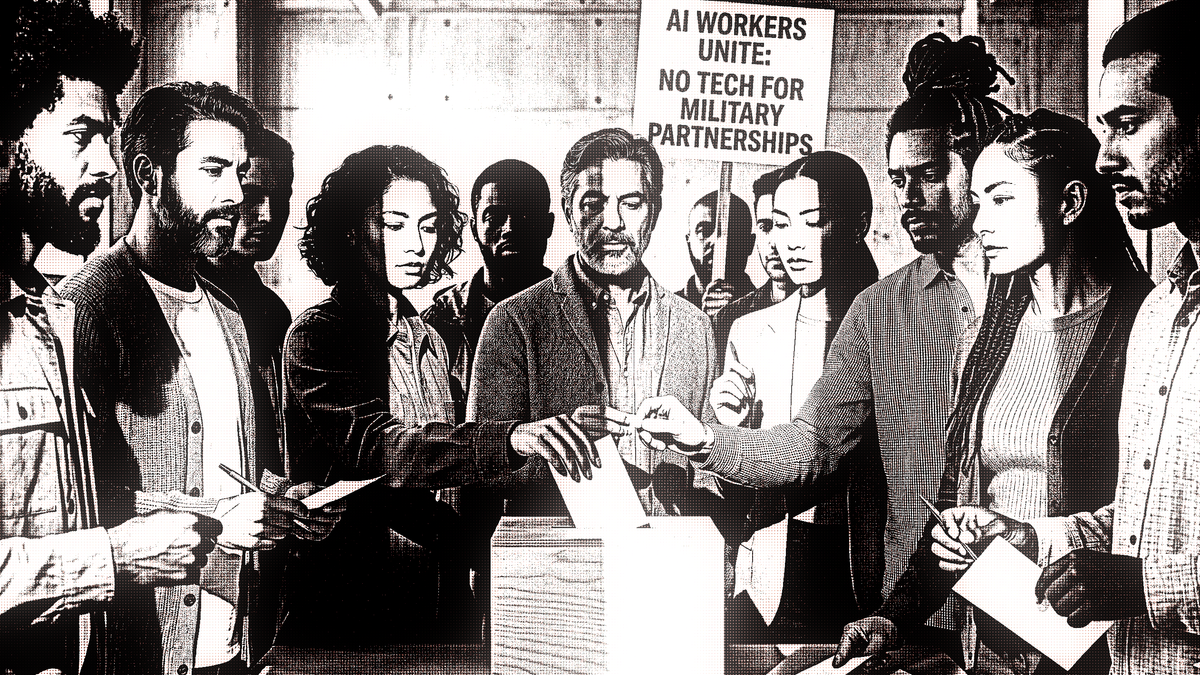

Google employees protest military uses of AI, vote for union

Employees at Google DeepMind’s London office voted to unionize, seeking recognition from the Communication Workers Union and Unite the Union specifically to block the lab from providing technology to US and Israeli militaries. The push accelerated after Alphabet removed its public pledge against weapons development and surveillance from its ethics guidelines in February 2025, a move that prompted one anonymous DeepMind employee to tell WIRED the company is moving toward “further militarization of the AI models we’re building here.” The unionization effort comes as Google signed a Pentagon deal allowing the US military to use its AI for “any lawful government purpose”—language the employees consider dangerously vague—and follows similar concerns across the industry: DeepMind and OpenAI staff signed a letter supporting Anthropic after the Department of Defense tried to designate it a supply chain risk for refusing autonomous weapons use. If Google refuses to recognize the unions, workers say they’ll escalate to a UK arbitration committee to compel recognition. (Wired)

Want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

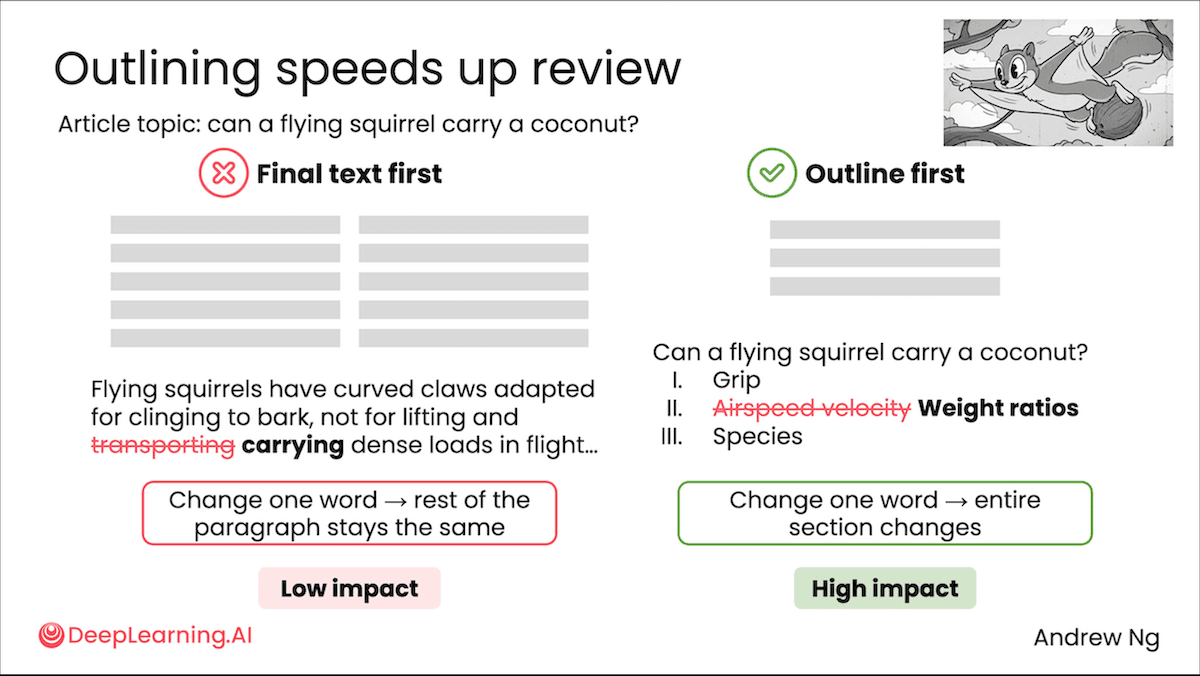

Last week, Andrew talked about the evolution of AI prompting since 2022 and introduced his new course, "AI Prompting for Everyone," which teaches skills for using AI tools such as ChatGPT effectively.

“I’m teaching a new course, AI Prompting for Everyone, to help everyone become an AI power user — whatever their current skill level — and prompt LLMs to take advantage of their latest capabilities.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

- GPT-5.5 Outperforms, Hallucinates as OpenAI’s latest model tops leaderboards for coding, visual puzzles, and overall intelligence.

- Tech giants including Alphabet, Amazon, Meta, and Microsoft acknowledge AI’s strain on environment, challenging their CO2 pledges.

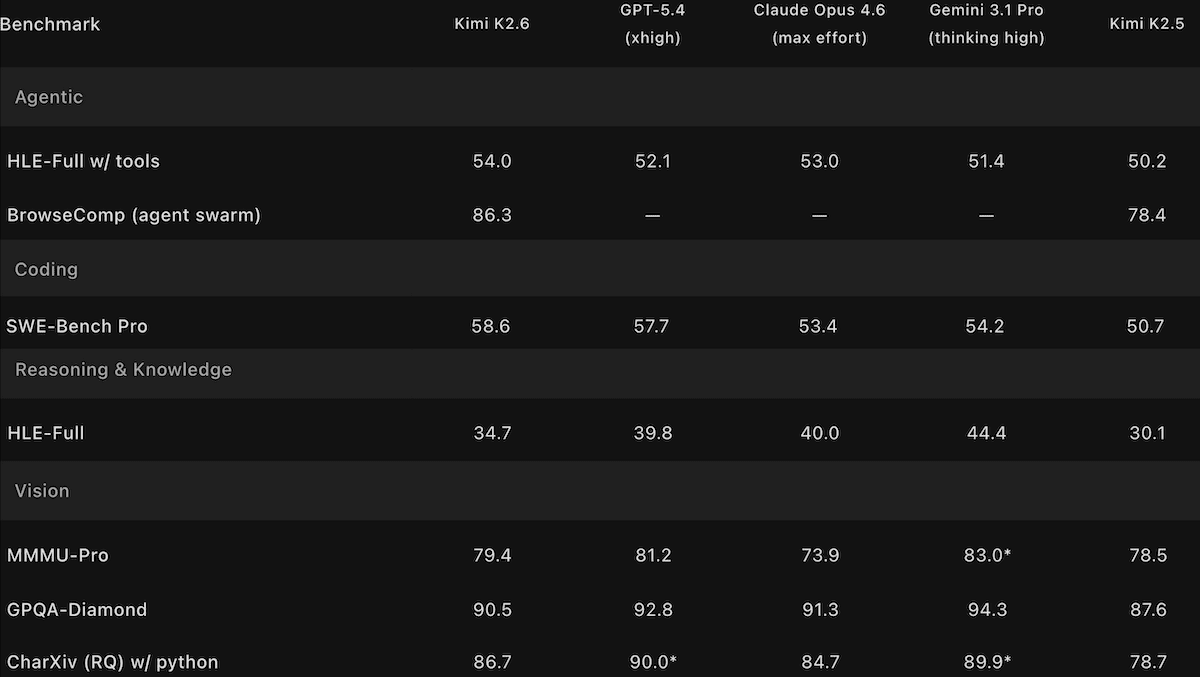

- Kimi K2.6 Challenges Open-Weights Champs by matching open Qwen3.6 Max and DeepSeek V4, though it falls just behind top closed models.

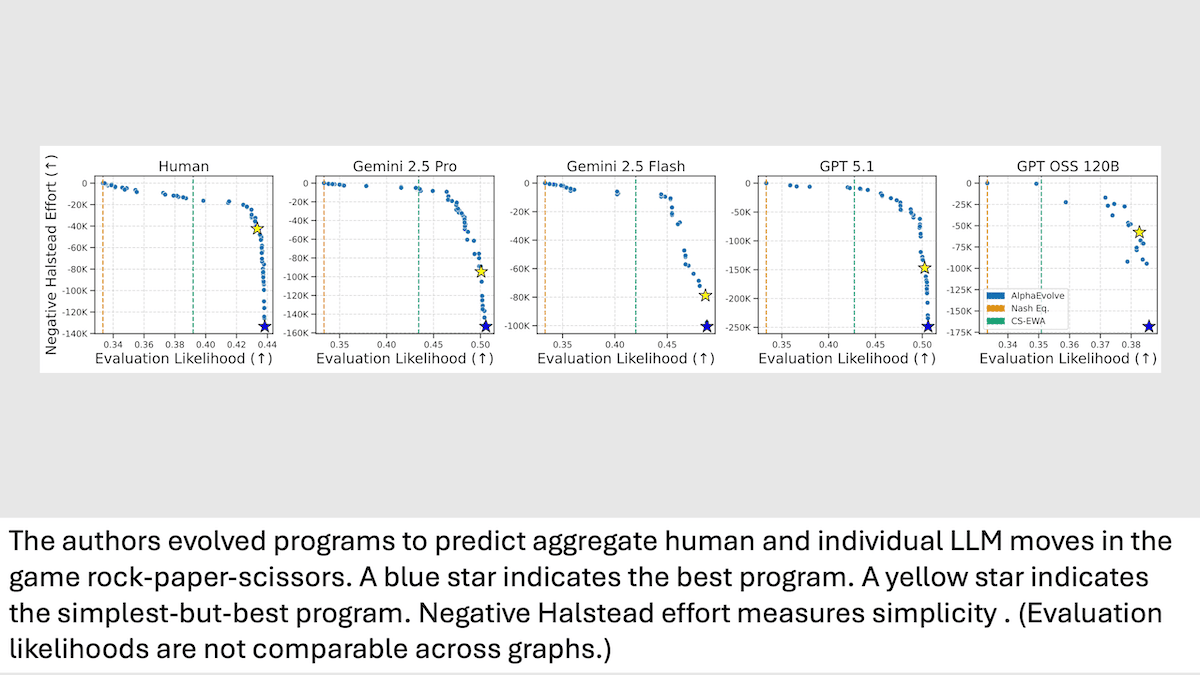

- Researchers at UT-Austin and Google model human decision-making in Rock-Paper-Scissors to compare strategic thinking in LLMs versus humans.

A special offer for our community

DeepLearning.AI recently launched the first-ever subscription plan for our entire course catalog! As a Pro Member, you’ll immediately enjoy access to:

- Over 150 AI courses and specializations from Andrew Ng and industry experts

- Labs and quizzes to test your knowledge

- Projects to share with employers

- Certificates to testify to your new skills

- A community to help you advance at the speed of AI

Enroll now to lock in a year of full access for $25 per month paid upfront, or opt for month-to-month payments at just $30 per month. Both payment options begin with a one-week free trial. Explore Pro’s benefits and start building today!

Data Points is produced by human editors with AI assistance.