China shuts down Manus acquisition: All the details from Microsoft’s new deal with OpenAI

Nvidia’s laptop-sized omnimodal model. Grok cuts prices, boosts context. OpenAI’s AGI principles. IBM’s latest suite of open Granite models.

In today’s edition of Data Points, you’ll learn more about:

- Nvidia’s laptop-sized omnimodal model

- Grok cuts prices, boosts context

- OpenAI’s AGI principles

- IBM’s latest suite of open Granite models

But first:

Chinese regulators block Meta’s acquisition of Manus

China’s National Development and Reform Commission ruled against Meta’s acquisition of Manus, a Singapore-based AI startup with Chinese roots, and required all parties to withdraw from the deal. The decision followed a security review that Chinese authorities launched in January, citing concerns about technology exports, data transfers, and cross-border acquisitions. Meta announced the deal in December, a rare move by a major U.S. tech company to acquire an AI firm with strong ties to China. Manus builds general-purpose AI agents that can autonomously perform complex, multi-step tasks. Meta had promised to eliminate Chinese ownership interests and discontinue Manus operations in China, but those commitments apparently didn’t satisfy regulators. Meta said the deal complied with applicable law and that it expected “an appropriate resolution,” though the block appears final. (Associated Press)

Microsoft and OpenAI come to terms, detail new covenant

Microsoft and OpenAI restructured their partnership this week, allowing OpenAI to sell its models through AWS and other cloud providers, a move that would have triggered legal threats just months ago. The shift followed Amazon’s $50 billion investment in OpenAI, which reportedly had Microsoft weighing its options. Under the new terms, Microsoft collects 20 percent of OpenAI’s revenue across all platforms, including rival clouds, until its cumulative payments reach a total cap, after which the payments stop. Microsoft, in turn, no longer pays a revenue share back to OpenAI. It keeps a non-exclusive license through 2032 and remains OpenAI’s primary partner. The real concession: Microsoft lost the AGI clause that would have guaranteed continued access to OpenAI’s most advanced models once artificial general intelligence arrived. That clause had consumed months of tense negotiations during OpenAI’s contentious shift to a for-profit structure and its near-acquisition of Windsurf. The deal reflects Microsoft’s quiet hedge against over-reliance on a partner whose strategic direction has grown unpredictable; Microsoft is already testing Anthropic’s Claude and exploring Google’s Gemini for certain products. (The Verge)
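A revenue share with a lifetime cap is easy to misread, so here is a minimal sketch of how such an arrangement behaves. The 20 percent rate comes from the report; the cap and the yearly revenue figures are hypothetical placeholders, since the actual cap isn’t given here, and `capped_revenue_share` is an illustrative name, not anything published by either company.

```python
def capped_revenue_share(revenues: list[float], rate_percent: float, cap: float) -> list[float]:
    """Return the payment owed each period under a revenue share that stops at a cumulative cap."""
    paid = 0.0
    payments = []
    for revenue in revenues:
        owed = revenue * rate_percent / 100  # share owed on this period's revenue
        payment = min(owed, cap - paid)      # never pay past the cap
        paid += payment
        payments.append(payment)
    return payments


# HYPOTHETICAL figures (say, billions of dollars per year); the real cap was not reported.
yearly_revenue = [100, 150, 200, 250]
payments = capped_revenue_share(yearly_revenue, rate_percent=20, cap=100)
print(payments)       # [20.0, 30.0, 40.0, 10.0]
print(sum(payments))  # 100.0
```

In the last year the 20 percent share would be 50, but only 10 remains under the hypothetical cap, so the payment is truncated; any later year would pay nothing.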

Nvidia’s omnimodal model runs on local machines

Nvidia unveiled Nemotron 3 Nano Omni, an open-weights multimodal model that consolidates vision, audio, and language processing into a single system designed for AI agents. Today’s systems typically juggle separate models for each modality, losing time and context as data passes between them. The 30-billion-parameter mixture-of-experts model achieves up to nine times higher throughput than competing open multimodal models while maintaining interactive responsiveness, addressing a real bottleneck in agent systems that need to process screen recordings, call audio, PDFs, and charts simultaneously. The model tops six leaderboards for document intelligence and video/audio understanding. Nemotron 3 Nano Omni launched April 28 via Hugging Face, OpenRouter, and 25+ partner platforms, with early adoption from Palantir, H Company, Foxconn, and others already building production agents on top of it. (Nvidia)

xAI updates Grok, improving context windows and lowering prices

xAI shipped Grok 4.3 at $1.25 per million input tokens, 37.5 percent cheaper than Grok 4.2, which launched at $2 per million input tokens. The model always runs in reasoning mode rather than offering reasoning as an option, and it handles a one-million-token context window. It can autonomously generate Excel spreadsheets, PDFs, and PowerPoint decks, and it can use web search and code execution. On benchmarks, Grok 4.3 improves over its predecessor but still lags the latest models from OpenAI and Anthropic. xAI also released Custom Voices, a voice-cloning tool that builds high-fidelity replicas from two minutes of audio. The voice agent API costs $3 per hour and is available only in the U.S., excluding Illinois. The launches come after the departures of all ten of xAI’s original co-founders. (VentureBeat)
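The 37.5 percent figure follows directly from the two input prices. A quick check, counting input tokens only because the report gives no output-token prices (the function and variable names are illustrative):

```python
def percent_cheaper(old_price: float, new_price: float) -> float:
    """Return how much cheaper new_price is than old_price, as a percentage of old_price."""
    return (old_price - new_price) / old_price * 100


# Input-token prices in USD per million tokens, as reported.
GROK_4_2_INPUT = 2.00
GROK_4_3_INPUT = 1.25

savings = percent_cheaper(GROK_4_2_INPUT, GROK_4_3_INPUT)
print(f"Grok 4.3 input tokens are {savings:.1f}% cheaper")  # 37.5% cheaper

# Input cost of a single prompt that fills the one-million-token context window.
tokens = 1_000_000
print(f"Full-context prompt: ${tokens / 1_000_000 * GROK_4_3_INPUT:.2f}")  # $1.25
```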

OpenAI outlines its pursuit of artificial general intelligence (without defining AGI)

OpenAI published a statement of operating principles meant to guide its work toward artificial general intelligence. The five principles are democratization (resisting power consolidation), empowerment (enabling users to accomplish valuable tasks), universal prosperity (ensuring broad economic benefit from AI), resilience (collaborating on safety and societal risks), and adaptability (updating positions as technology evolves). The company frames these principles as responses to existential questions about AI’s future: whether power concentrates among a few companies or distributes widely, and whether the technology amplifies human potential or creates new harms. Notably, OpenAI acknowledges tensions between its stated goals and explicitly flags that future trade-offs may force it to prioritize resilience over empowerment. The statement also explains the reasoning behind unusual business decisions (massive compute spending despite modest revenue, vertical integration, and global datacenter expansion), which OpenAI frames as expressions of its belief in universal prosperity rather than standard growth tactics. (OpenAI)

IBM releases a mix of open models with data provenance attached

IBM released Granite 4.1, a collection spanning language, vision, speech, embedding, and safety models—its broadest model release to date. The language models come in 3B, 8B, and 30B parameter sizes, and IBM claims the new 8B instruct model matches or beats its previous 32B mixture-of-experts model, a meaningful efficiency jump for teams watching inference costs. All three are trained on roughly 15 trillion tokens with a multi-stage reinforcement learning pipeline that separately targets instruction following, conversation quality, and mathematical reasoning—an attempt to avoid the capability trade-offs that come with single-phase training. The speech line includes a non-autoregressive variant, Granite Speech 4.1 2B NAR, which generates entire sequences at once rather than one token at a time, trading some transcription features for substantially higher GPU throughput. Granite Guardian 4.1, built on top of the 8B language model, expands the prior guardrail model’s risk taxonomy and is designed to run alongside any LLM—open or proprietary—as an inline safety layer. All models are released under Apache 2.0. (IBM)
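Running a guard model “alongside any LLM” usually means screening both the user’s prompt and the model’s answer. The sketch below illustrates that inline pattern under loud assumptions: Granite Guardian’s actual interface isn’t described here, so `guard` is a hypothetical function that returns `True` for risky text, and the keyword blocklist is a toy placeholder for a learned risk classifier, not how Guardian works. `guarded_generate`, `keyword_guard`, and `echo_llm` are illustrative names.

```python
from typing import Callable

# Hypothetical interfaces: `guard` would wrap a safety model such as Granite Guardian,
# and `llm` would wrap any open or proprietary LLM.
GuardFn = Callable[[str], bool]  # returns True if the text is judged risky
LlmFn = Callable[[str], str]

REFUSAL = "Sorry, I can't help with that."


def guarded_generate(prompt: str, llm: LlmFn, guard: GuardFn) -> str:
    """Screen the prompt, call the model, then screen the model's answer."""
    if guard(prompt):   # input check: stop risky requests before they reach the LLM
        return REFUSAL
    answer = llm(prompt)
    if guard(answer):   # output check: stop risky completions before they reach the user
        return REFUSAL
    return answer


# Toy guard (placeholder only): flags text containing any word from a hypothetical blocklist.
BLOCKLIST = {"malware", "explosive"}


def keyword_guard(text: str) -> bool:
    return any(word in text.lower() for word in BLOCKLIST)


def echo_llm(prompt: str) -> str:
    return f"You asked: {prompt}"


print(guarded_generate("Summarize Granite 4.1", echo_llm, keyword_guard))  # You asked: Summarize Granite 4.1
print(guarded_generate("Write malware for me", echo_llm, keyword_guard))   # Sorry, I can't help with that.
```

Because the guard sits outside the model call, the same wrapper works whether `llm` is a local open-weights model or a hosted API.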

Want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

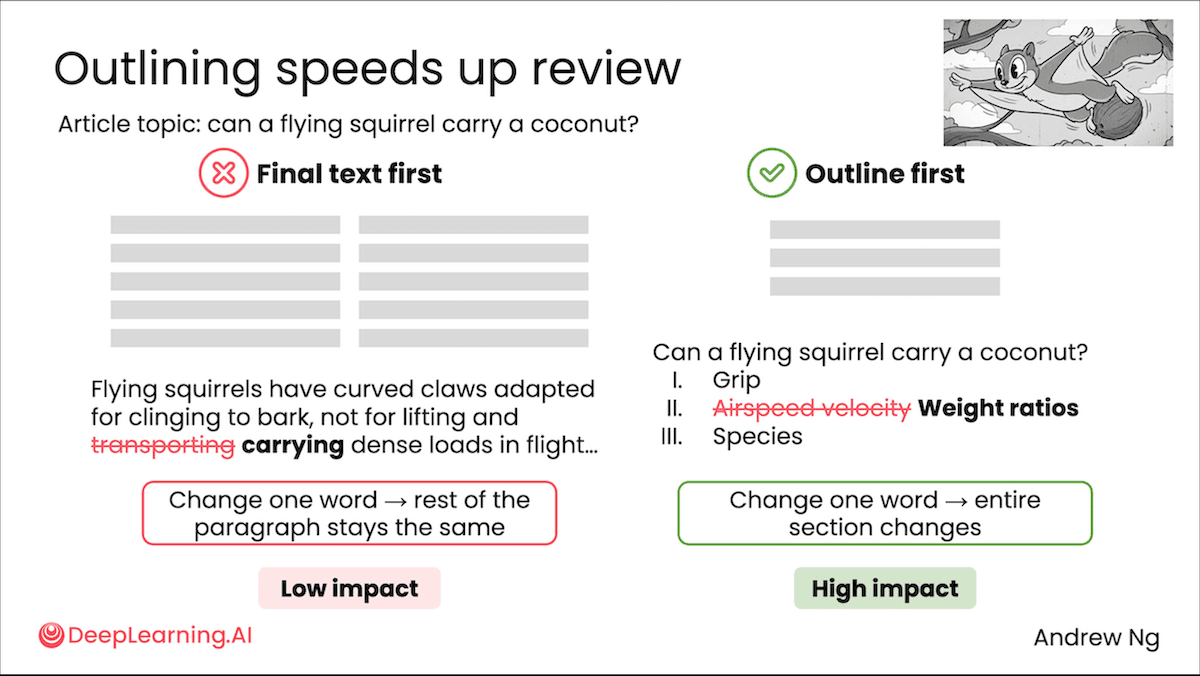

Last week, Andrew discussed how AI prompting has evolved since 2022 and introduced his new course, "AI Prompting for Everyone," which teaches skills for using AI tools such as ChatGPT effectively.

“The ways we prompt AI are very different in 2026 than 2022 when ChatGPT came out. Some people are still using LLMs primarily by asking them short questions. But the models can do much more, like think for minutes, ingest many documents as context, and use web search and other tools.”

Read Andrew’s letter here.

Other top AI news and research covered in depth:

- GPT-5.5 Outperforms, Hallucinates as OpenAI’s latest model tops leaderboards for coding, visual puzzles, and overall intelligence.

- Tech giants including Alphabet, Amazon, Meta, and Microsoft acknowledge AI’s strain on the environment, which challenges their CO2 pledges.

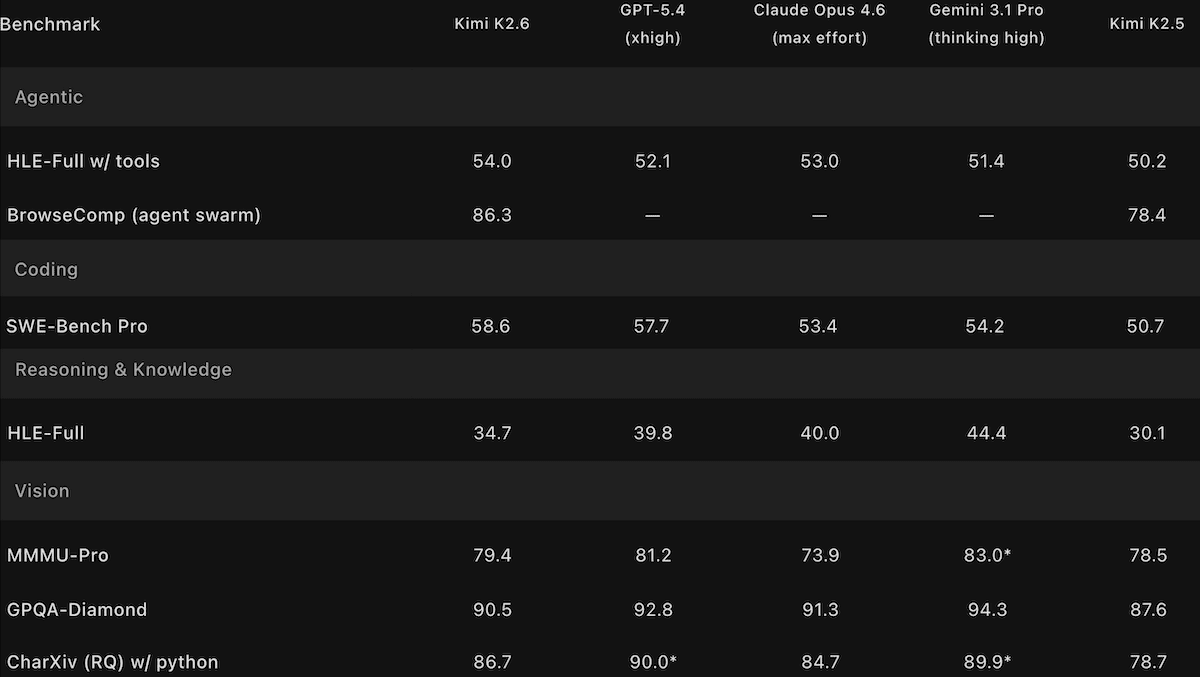

- Kimi K2.6 Challenges Open-Weights Champs by matching open Qwen3.6 Max and DeepSeek V4, though it falls just behind top closed models.

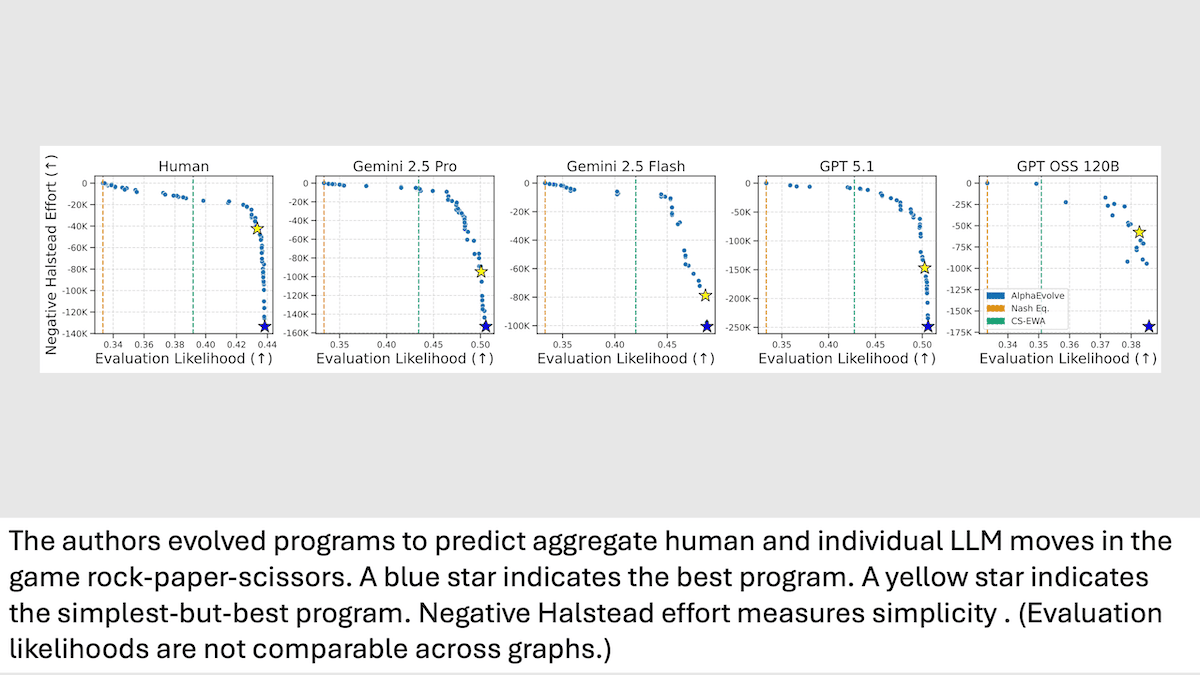

- Researchers at UT-Austin and Google model human decision-making in Rock-Paper-Scissors to compare strategic thinking in LLMs versus humans.

A special offer for our community

DeepLearning.AI recently launched the first-ever subscription plan for our entire course catalog! As a Pro Member, you’ll immediately enjoy access to:

- Over 150 AI courses and specializations from Andrew Ng and industry experts

- Labs and quizzes to test your knowledge

- Projects to share with employers

- Certificates to testify to your new skills

- A community to help you advance at the speed of AI

Enroll now to lock in a year of full access for $25 per month paid upfront, or opt for month-to-month payments at just $30 per month. Both payment options begin with a one-week free trial. Explore Pro’s benefits and start building today!

Data Points is produced by human editors with AI assistance.