Machine Learning Research

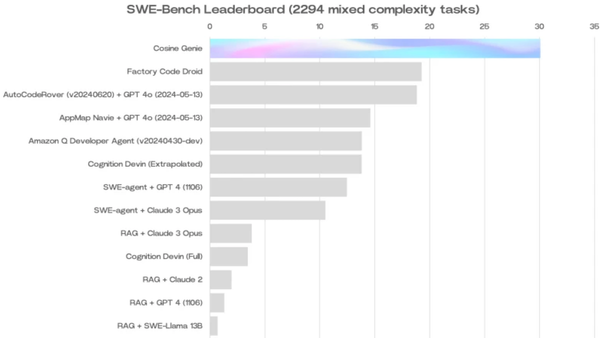

Models Ranked for Hallucinations: Measuring language model hallucinations during information retrieval

How often do large language models make up information when they generate text based on a retrieved document? A study evaluated the tendency of popular models to hallucinate while performing retrieval-augmented generation (RAG).