Machine Learning Research

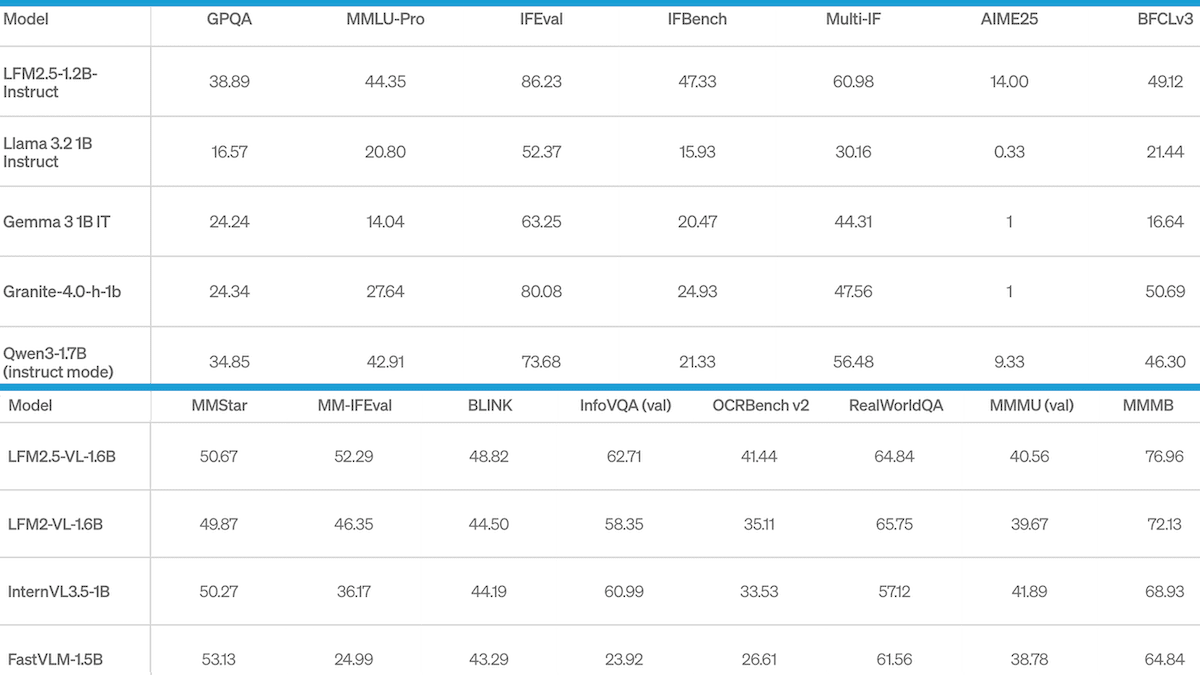

Faster Reasoning at the Edge: Liquid AI’s small reasoning model mixes attention with convolutional layers for efficiency

Reasoning models with 1 billion to 2 billion parameters typically require more than 1 gigabyte of RAM to run. Liquid AI released one that runs in less than 900 megabytes of memory and does so with exceptional speed and efficiency.