Building Voice-Enabled Apps Is Easier Than You May Think: Voice-enabled AI applications are improving quickly. Get ready for the next wave of AI!

Dear friends,

Voice-based AI that you can talk to is improving rapidly, yet most people still don’t appreciate how pervasive voice UIs (user interfaces) will become. Today, we use a keyboard and mouse to control most desktop and web applications. In the future, I hope we will also be able to steer many of these applications by talking to them. I’m particularly excited about the work of Vocal Bridge (an AI Fund portfolio company), where CEO Ashwyn Sharma is leading the effort to provide developer tools that enable this.

Every significant UI change has spawned many new applications as well as allowed us to upgrade existing ones. The mouse made point-and-click possible. Touch and swipe gestures enabled new classes of mobile apps. Until recently, voice UIs suffered from high error rates and/or latency, but as they become more reliable, they will open up many new applications.

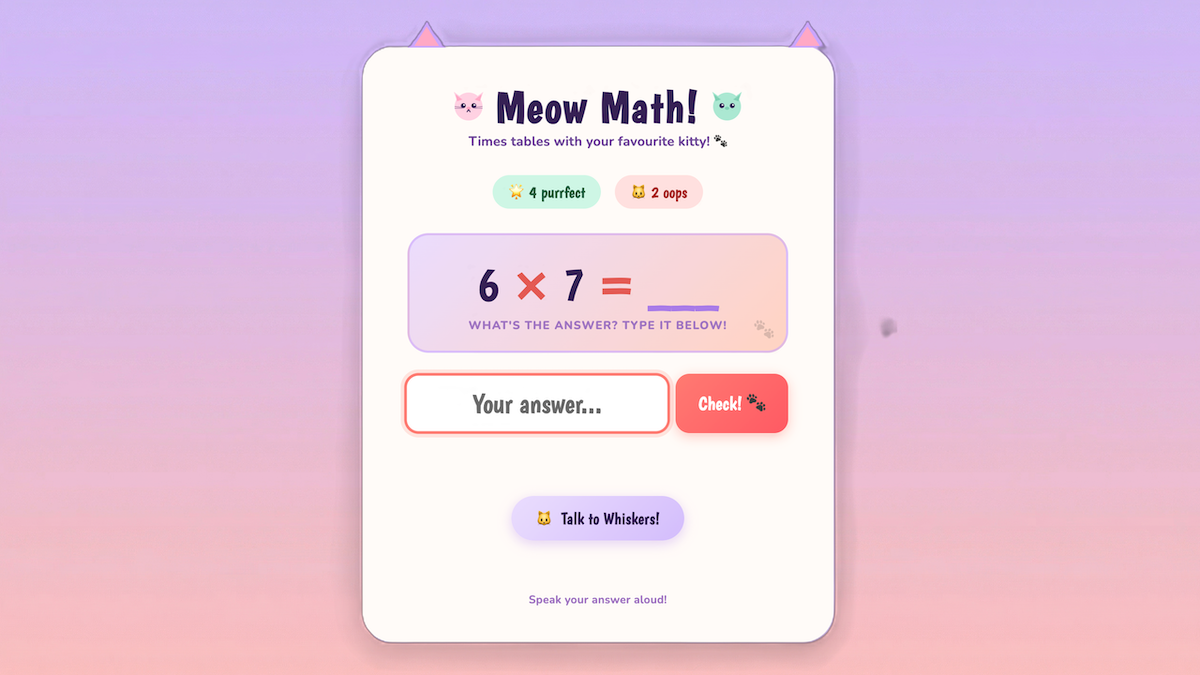

For instance, I built a simple math-quiz application for my daughter. She has enjoyed using the keyboard to play this game (which shows a cute cat graphic for correct answers because she loves cats! 🐱). Adding a voice UI, so that it quizzes her verbally in a friendly way and she can respond verbally, removes friction and changes how the experience feels.

The vast majority of people find speaking and listening much easier than writing and reading. Because most developers are highly literate (and so are readers of The Batch), it’s easy to forget how hard many people find writing. Indeed, children who spend time with adults will automatically learn to speak and listen, but unless they are taught explicitly, they will not learn to read or write. Sci-fi movies from the past few decades, like Star Trek, frequently imagine people speaking with computers rather than typing at them. This is a vision of the future worth building toward!

I’ve written about the tradeoff between latency and intelligence. The core problem is that while voice-in-voice-out models have low latency (which is important for verbal communication), they are hard to control and suffer from low reliability and intelligence. In comparison, a pipeline of Speech-to-text → LLM/Agentic AI → Text-to-speech gives high reliability but introduces excessive latency. Vocal Bridge implemented a custom architecture that uses two agents. A foreground agent converses with the user in real time, which ensures low latency. A background agent manages a complex agentic workflow, reasons, applies guardrails, calls tools, and does whatever else is needed to produce high-quality answers and actions, which ensures high intelligence.
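
To make the foreground/background split concrete, here is a minimal Python sketch of the general pattern. It is only an illustration under stated assumptions, not Vocal Bridge's implementation or API: the names `background_agent`, `foreground_agent`, and `run_foreground_demo` are hypothetical, the slow agentic workflow is simulated with a short sleep, and "speaking" is modeled as appending text to a list.

```python
import asyncio
from typing import Callable, List


async def background_agent(question: str) -> str:
    """Hypothetical stand-in for a slow agentic workflow
    (reasoning, guardrails, tool calls)."""
    await asyncio.sleep(0.05)  # simulated slow work; the delay is an assumption
    return f"Here is a careful answer to: {question}"


async def foreground_agent(question: str, speak: Callable[[str], None]) -> None:
    """Acknowledge the user right away, then relay the background result."""
    # Start the slow work first so it runs while the user hears a reply.
    task = asyncio.create_task(background_agent(question))
    speak("Let me think about that.")  # low-latency acknowledgment
    speak(await task)                  # higher-quality answer, once ready


def run_foreground_demo(question: str) -> List[str]:
    """Run one turn and return everything that was 'spoken', in order."""
    spoken: List[str] = []
    asyncio.run(foreground_agent(question, spoken.append))
    return spoken
```

Calling `run_foreground_demo("What is 7 times 8?")` returns the quick acknowledgment first and the background agent's answer second. In a real system the foreground agent would presumably be a low-latency voice model and the background agent an LLM-driven workflow; the ordering of the two replies is the point of the sketch.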

I don't expect voice UIs to completely replace older interfaces. Instead, they will complement them, just as the mouse complements the keyboard. In some contexts, such as when working in close proximity to others, users will prefer to type rather than speak. But the potential for voice UIs goes well beyond the currently dominant use cases of automating call centers and providing an alternative to typing. In my math-quiz app, the application can speak and also update the questions and animations shown on the screen in response to spoken (or typed) inputs. This multimodal visual+voice interaction creates a much richer user experience than the voice-only interactions that many voice AI companies have focused on. One key to making it work is a background-agent loop that communicates with the UI in both directions: it receives input from the UI and calls tools to update the UI.
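
The two-way loop described above can be sketched roughly as follows. This is a toy illustration, not the code of my math-quiz app or of Vocal Bridge: `QuizUI`, its tool methods, `background_loop`, and `run_quiz_demo` are all hypothetical names, and a simple rule stands in for the agent that would decide which tools to call.

```python
import asyncio
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class QuizUI:
    """Hypothetical on-screen state of a math quiz."""
    question: str = "3 + 4"
    answer: int = 7
    animation: str = "none"
    log: List[str] = field(default_factory=list)

    # "Tools" the background agent can call to change what is on screen.
    def show_animation(self, name: str) -> None:
        self.animation = name
        self.log.append(f"animation:{name}")

    def show_question(self, a: int, b: int) -> None:
        self.question, self.answer = f"{a} + {b}", a + b
        self.log.append(f"question:{self.question}")


async def background_loop(ui: QuizUI, events: asyncio.Queue) -> None:
    """Receive spoken or typed inputs from the UI and call tools in response."""
    while True:
        event: Optional[Tuple[str, str]] = await events.get()
        if event is None:  # sentinel value: stop the loop
            break
        _source, text = event  # source is "voice" or "keyboard"
        if text.strip() == str(ui.answer):
            ui.show_animation("happy_cat")
            ui.show_question(ui.answer, 2)  # next question builds on the last
        else:
            ui.show_animation("try_again")


def run_quiz_demo(inputs: List[Tuple[str, str]]) -> QuizUI:
    """Feed a fixed list of inputs through the loop and return the final UI."""
    async def main() -> QuizUI:
        ui, events = QuizUI(), asyncio.Queue()
        for item in inputs:
            events.put_nowait(item)
        events.put_nowait(None)
        await background_loop(ui, events)
        return ui
    return asyncio.run(main())
```

For example, `run_quiz_demo([("voice", "8"), ("keyboard", "7")])` first triggers the "try_again" animation for the wrong spoken answer, then the cat animation and a new question for the correct typed one. Spoken and typed inputs flow through the same queue, which is what lets the voice and visual sides stay in sync.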

Building voice UIs is probably easier than you think. Using Claude Code, I added voice capabilities to an earlier, non-voice version of my math-quiz app in less than an hour. At a recent hackathon hosted by DeepLearning.AI and AI Fund, developers built voice-powered apps with Vocal Bridge, including a clinical trial matcher for cancer patients, a conversational portfolio advisor, and interactive voice layers for existing text-based agents. I was delighted by the creativity that this new UI enables.

Voice UIs will be an important building block for AI applications. Only a minuscule fraction of the world's developers have ever created a voice app, so this is fertile ground for building. If you’d like to add voice to an application, you can try Vocal Bridge for free.

Keep building!

Andrew