Thinking Machines debuts a new type of model. Google’s AI mouse promises better human-AI interaction.

GitHub’s new Copilot pricing. Baidu’s smaller, faster flagship model. Google’s rethinking of mathematical research. RL Conductor’s orchestration of AI agents.

In today’s edition of Data Points, you’ll learn more about:

- GitHub’s new Copilot pricing

- Baidu’s smaller, faster flagship model

- Google’s rethinking of mathematical research

- RL Conductor’s orchestration of AI agents

But first:

Interaction models yield multimodality with speed

Thinking Machines released a research preview of an “interaction model”: a system trained from scratch for real-time, multimodal conversation, rather than stitching together voice-activity detection and other components as a harness around a standard turn-based model. The core architectural choice is a 200ms micro-turn design: the model continuously interleaves 200ms chunks of input processing with output generation, letting audio, video, and text flow as concurrent streams rather than alternating sequences. This lets the model interrupt, backchannel, translate live speech, or react to visual cues without a separate dialog management layer—behaviors that today require hand-crafted scaffolding that can’t improve as the underlying model scales. For heavier reasoning, the interaction model delegates to an asynchronous background model while the front-end stays present and responsive. On infrastructure, the team implemented streaming sessions in SGLang to handle frequent small prefills without typical LLM inference latency, and contributed batch-invariant kernels for training stability. (Thinking Machines)
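The micro-turn idea can be illustrated with a toy loop. This is only a sketch under stated assumptions, not Thinking Machines' implementation: `MicroTurnLoop`, `toy_policy`, and the string "chunks" are hypothetical stand-ins, and the only detail taken from the article is the 200ms chunk length and the interleaving of input processing with output generation.

```python
from dataclasses import dataclass, field
from typing import Callable, List

CHUNK_MS = 200  # micro-turn length stated in the article


@dataclass
class MicroTurnLoop:
    """Toy loop that alternates one input chunk with one output chunk."""

    # Hypothetical stand-in for the model: maps the history so far to an action.
    respond: Callable[[List[str]], str]
    history: List[str] = field(default_factory=list)

    def run(self, input_chunks: List[str]) -> List[str]:
        outputs = []
        for chunk in input_chunks:
            # Ingest one 200 ms slice of input (audio/video/text in a real system).
            self.history.append(f"in:{chunk}")
            # Immediately produce one slice of output, so the model can react
            # within a single micro-turn instead of waiting for a full turn.
            action = self.respond(self.history)
            self.history.append(f"out:{action}")
            outputs.append(action)
        return outputs


def toy_policy(history: List[str]) -> str:
    """Assumed policy: speak during silence, listen while the user talks."""
    latest_input = history[-1]  # always an "in:" entry when called by run()
    if latest_input == "in:":
        return "speak"
    return "listen"


loop = MicroTurnLoop(respond=toy_policy)
actions = loop.run(["hello", "", "wait"])  # "" stands for a silent chunk
```

Because the decision to speak or listen is re-evaluated every chunk, an interruption ("wait") takes effect on the very next micro-turn without a separate dialog manager.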

Google announces Magic Pointer for easier computer use

Google DeepMind developed an AI-enabled mouse pointer powered by Gemini that understands what users are pointing at, eliminating the need for detailed text prompts. The system captures visual and semantic context around the cursor, letting users make requests through simple gestures and voice—“Fix this” or “Show me directions”—rather than elaborate instructions. The pointer works across applications without forcing users into separate AI windows. Google is already integrating it into Chrome, where users can select webpage elements and ask Gemini to compare or visualize them, and plans to roll out a “Magic Pointer” feature in its new Googlebook laptop. The approach rests on four principles: maintaining user flow across apps, understanding context visually, enabling natural shorthand speech, and converting pixels into actionable entities like dates, places, and objects. (Google)

GitHub revises Copilot pricing tiers and usage limits

GitHub is restructuring Copilot individual pricing ahead of the June 1st shift to usage-based billing. Pro and Pro+ now include more total monthly usage at the same price—$15 and $70 respectively—through a two-tier system: base credits matched 1:1 to subscription price, plus a variable flex allotment on top. A new Max plan at $100/month offers $200 in total monthly usage for sustained, high-volume work. The flex component adjusts over time as model costs and efficiency improve; base credits stay fixed. Code completions remain unlimited on all paid plans and don’t consume credits. (GitHub)
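The two-tier arithmetic can be worked through for the one plan whose total is stated. This is a minimal sketch: the `Plan` class and its field names are hypothetical, and the flex figure is only what the article's numbers imply today, since GitHub says the flex component may change over time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    price_usd: int        # monthly subscription price
    total_usage_usd: int  # total monthly usage included

    @property
    def base_credits_usd(self) -> int:
        # Base credits are matched 1:1 to the subscription price.
        return self.price_usd

    @property
    def flex_usd(self) -> int:
        # The flex allotment is whatever the total includes beyond base credits.
        return self.total_usage_usd - self.base_credits_usd


# Figures for the Max plan as given in the article.
max_plan = Plan("Max", price_usd=100, total_usage_usd=200)
print(max_plan.base_credits_usd, max_plan.flex_usd)  # prints "100 100"
```

The article does not give total usage figures for Pro and Pro+, so their flex allotments are left out rather than guessed.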

ERNIE 5.1 drastically shrinks ERNIE 5 for speed and price

Baidu released ERNIE 5.1, a compressed derivative of ERNIE 5.0 that cuts total parameters to roughly one-third of the original while, according to Baidu, staying competitive with leading models on several benchmarks. The model used only six percent of typical pre-training compute, a saving achieved through an elastic training framework that simultaneously optimizes sub-models across varying depths, expert capacities, and routing sparsity. Post-training relies on a disaggregated reinforcement learning infrastructure that decouples the training, inference, and reward systems so that each scales independently in a fully asynchronous pipeline. ERNIE 5.1 ranks fourth globally and first among Chinese models on the Arena Search leaderboard with a score of 1,223. It scores 99.6 on AIME26 math problems with tool use, trailing only Gemini 3.1 Pro, and approaches leading closed-source models on GPQA and MMLU-Pro. (Baidu)
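One way to picture "optimizing sub-models across varying depths, expert capacities, and routing sparsity" is to sample a smaller configuration from a full one at each training step. The sketch below is an assumption-laden illustration, not Baidu's framework: `SubModelConfig`, `sample_sub_model`, the sampling ranges, and every size in `FULL` are made up for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SubModelConfig:
    num_layers: int         # depth
    experts_per_layer: int  # expert capacity
    top_k: int              # experts routed per token (routing sparsity)


# Illustrative sizes only; these are NOT ERNIE's actual dimensions.
FULL = SubModelConfig(num_layers=48, experts_per_layer=64, top_k=8)


def sample_sub_model(full: SubModelConfig, rng: random.Random) -> SubModelConfig:
    """Draw a sub-model that fits inside the full model along all three axes."""
    num_layers = rng.randint(full.num_layers // 2, full.num_layers)
    experts = rng.randint(full.experts_per_layer // 4, full.experts_per_layer)
    # A token cannot be routed to more experts than the sub-model has.
    top_k = rng.randint(1, min(full.top_k, experts))
    return SubModelConfig(num_layers, experts, top_k)


rng = random.Random(0)
# In an elastic setup, each of these would share weights with the full model
# and receive gradient updates during the same training run.
candidates = [sample_sub_model(FULL, rng) for _ in range(4)]
```

Training many nested configurations together is what would let a smaller model be extracted afterward without a separate pre-training run, which is consistent with the compute saving the article describes.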

Co-Mathematician adds AI to human experts, tops benchmarks

Google researchers built an interactive workbench where mathematicians and AI agents collaborate on open research problems through a shared, evolving workspace. The system doesn’t chase end-to-end autonomy—it mirrors how real mathematical work actually happens: messier, more iterative, full of detours and backtracks. Users ideate, search literature, explore computations, and refine questions while asynchronous agent teams run in parallel, always stoppable. At the center sits a living “working paper” capturing hypotheses, dead ends, and the reasoning behind each claim through margin notes. The full working history is preserved; researchers can steer at any moment. Early testing found mathematicians solving open problems and catching references the literature had missed. On FrontierMath Tier 4 the system reached 48 percent, higher than any other evaluated approach. The deeper bet is that AI’s role in mathematics isn’t to replace human insight but to handle the grinding parallel work that slows discovery: the literature hunts, the computational checks, the dead-end explorations that narrow what’s worth thinking about. (ArXiv)

Using a smaller language model to orchestrate more powerful agents

Researchers trained a seven-billion-parameter model called the RL Conductor to automatically design and execute multi-agent workflows by generating natural language instructions for other LLMs. Instead of relying on hand-crafted prompts, the Conductor learns through reinforcement learning to decompose problems, assign subtasks to specialized workers, and specify which agents see each other’s outputs, with the whole plan encoded as structured Python lists. The Conductor outperforms each of its individual workers, reaches state-of-the-art results on LiveCodeBench and GPQA Diamond, and makes fewer agent calls than manually designed systems. The researchers went further by training the Conductor on randomized agent pools so that it generalizes to arbitrary combinations of open and closed models, and by letting it recursively call itself as a worker, which creates a new dimension for scaling computation at test time. The finding: effective coordination strategies can emerge from end-to-end reward optimization rather than human-designed scaffolding. (ArXiv)
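A workflow "encoded as structured Python lists" might look roughly like the following. This is a hedged sketch, not the paper's actual output format: the three parallel lists, the worker names, `make_stub`, and `run_workflow` are all hypothetical, and the stub workers stand in for real LLM calls.

```python
from typing import Callable, Dict, List

Worker = Callable[[str, List[str]], str]

# Hypothetical plan: one entry per step, kept in three parallel lists.
workers = ["planner", "coder", "reviewer"]  # which agent runs each step
instructions = [                            # natural language subtasks
    "Outline an approach to the problem.",
    "Implement the outlined approach.",
    "Check the implementation against the outline.",
]
visible = [[], [0], [0, 1]]                 # earlier steps each agent may see


def make_stub(name: str) -> Worker:
    """Stand-in for an LLM worker; reports how much context it was shown."""
    def worker(instruction: str, context: List[str]) -> str:
        return f"{name} saw {len(context)} prior output(s)"
    return worker


def run_workflow(
    workers: List[str],
    instructions: List[str],
    visible: List[List[int]],
    pool: Dict[str, Worker],
) -> List[str]:
    outputs: List[str] = []
    for name, instruction, sees in zip(workers, instructions, visible):
        # Pass along only the outputs this step is allowed to see.
        context = [outputs[i] for i in sees]
        outputs.append(pool[name](instruction, context))
    return outputs


pool = {name: make_stub(name) for name in workers}
results = run_workflow(workers, instructions, visible, pool)
```

Since the plan is plain data, a learned policy can emit it as text and a thin executor can run it, which keeps the orchestration logic in the model rather than in hand-written scaffolding.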

Want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

Last week, Andrew talked about dispelling the myth of an AI jobpocalypse, emphasizing that AI is leading to job creation rather than large-scale unemployment and highlighting the importance of adapting to new AI-driven roles.

“Contrary to the predictions of an AI jobpocalypse, I predict the opposite: There will be an AI jobapalooza! AI will lead to a lot more good AI engineering jobs, and I’m also optimistic about the future of the overall job market.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

- ByteDance is aiming for video dominance by adding its state-of-the-art Seedance 2.0 video model to CapCut, while OpenAI pulls back from similar efforts.

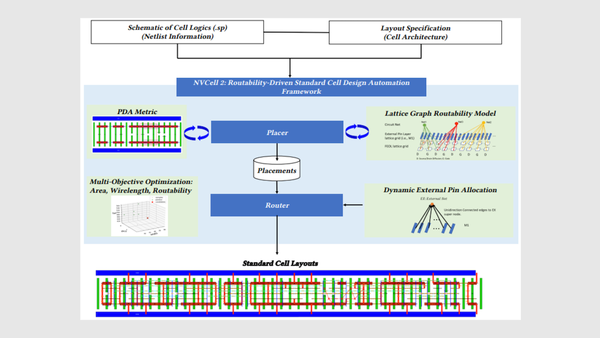

- Nvidia is leveraging AI to design chips with models that create circuits, verify designs, and test new layouts, pushing the boundaries of semiconductor innovation.

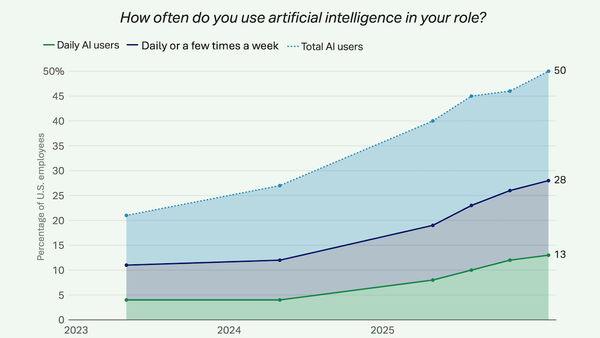

- A Gallup poll reveals that while AI boosts productivity in the workplace, a significant number of workers have yet to experience its benefits firsthand.

- Sony, in collaboration with university researchers, is advancing robotics by training robots to learn new tasks without catastrophic forgetting, enhancing their adaptability and efficiency.

A special offer for our community

In case you missed it, DeepLearning.AI launched our first-ever subscription plan for our entire course catalog! As a Pro Member, you’ll immediately enjoy access to:

- Nearly 200 AI short and long courses from Andrew Ng and industry experts

- Labs and quizzes to test your knowledge

- Projects to share with employers

- Certificates to testify to your new skills

- A community to help you advance at the speed of AI

Enroll now to lock in a year of full access for $25 per month paid upfront, or opt for month-to-month payments at just $30 per month. Both payment options begin with a one-week free trial. Explore Pro’s benefits and start building today!

Data Points is produced by human editors with AI assistance.