Qwen3.5 Small tops mobile-sized open models: Google introduces its own small model, Gemini 3.1 Flash-Lite

Claude’s expanded memory feature, including imports. GPT-5.3 Instant, a terse update to ChatGPT. How LLMs can unmask pseudonymous online users. Alibaba’s Qwen leadership losses soon after 3.5 release.

In today’s edition of Data Points, you’ll learn more about:

- Claude’s expanded memory feature, including imports

- GPT-5.3 Instant, a terse update to ChatGPT

- How LLMs can unmask pseudonymous online users

- Alibaba’s Qwen leadership losses soon after 3.5 release

But first:

Qwen3.5 Small models match or beat larger open competitors

Alibaba released the Qwen3.5 Small model series, four AI models ranging from 0.8 billion to 9 billion parameters that run on standard laptops and mobile devices. The largest, Qwen3.5-9B, scores 81.7 on the GPQA Diamond graduate-level reasoning benchmark, surpassing OpenAI’s gpt-oss-120B (80.1) despite being roughly 13 times smaller, and leads in multimodal tasks with 70.1 on MMMU-Pro visual reasoning versus Gemini 2.5 Flash-Lite’s 59.7. (But see Gemini 3.1 Flash-Lite below.) Qwen’s small models use a hybrid architecture that combines Gated Delta Networks with sparse Mixture-of-Experts, plus native multimodal training through early fusion, enabling the 4B and 9B versions to handle video analysis, document parsing, and UI navigation tasks that previously required models ten times larger. All weights are available under the Apache 2.0 license on Hugging Face and ModelScope, allowing unrestricted commercial use and customization. The efficiency gains change which model sizes developers can deploy in production agentic workflows: tasks like automated coding, visual workflow automation, and real-time edge analysis can now run locally, without cloud API costs or latency. (Hugging Face)
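For readers unfamiliar with Gated Delta Networks: the core of such a layer is a fixed-size memory matrix S updated by a gated delta rule, S_t = α_t(S_{t-1} − β_t (S_{t-1}k_t)k_tᵀ) + β_t v_t k_tᵀ, with output S_t q_t. The gate α_t decays old memory, and β_t controls how strongly whatever is stored under key k_t gets overwritten by the new value v_t. Below is a minimal single-head, unbatched sketch of that recurrence as described in the Gated DeltaNet literature, assuming L2-normalized keys. It is not Qwen’s implementation, the function names are hypothetical, and it leaves out how Qwen3.5 interleaves these layers with attention and expert layers.

```python
import math

Matrix = list[list[float]]
Vector = list[float]


def normalize(x: Vector) -> Vector:
    """L2-normalize a key vector (the delta rule assumes unit-norm keys)."""
    n = math.sqrt(sum(v * v for v in x))
    return [v / n for v in x]


def gated_delta_step(S: Matrix, k: Vector, v: Vector, alpha: float, beta: float) -> Matrix:
    """One step of S_t = alpha * (S - beta * (S k) k^T) + beta * v k^T.

    S has shape d_v x d_k. alpha in [0, 1] decays the whole memory;
    beta in [0, 1] sets how much of the old value under key k is replaced by v.
    """
    d_v, d_k = len(S), len(k)
    # What the memory currently returns for key k.
    Sk = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]
    return [
        [alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j] for j in range(d_k)]
        for i in range(d_v)
    ]


def read(S: Matrix, q: Vector) -> Vector:
    """Layer output for query q: o = S q."""
    return [sum(S[i][j] * q[j] for j in range(len(q))) for i in range(len(S))]


if __name__ == "__main__":
    d_k, d_v = 4, 3
    S = [[0.0] * d_k for _ in range(d_v)]
    key = normalize([1.0, 2.0, 0.0, -1.0])
    # Write a value under `key`, then overwrite it with a new value.
    S = gated_delta_step(S, key, [1.0, 0.0, 0.0], alpha=1.0, beta=1.0)
    S = gated_delta_step(S, key, [0.0, 5.0, 0.0], alpha=1.0, beta=1.0)
    print(read(S, key))  # approximately [0.0, 5.0, 0.0], up to floating-point error
```

Because the state S has a fixed size, per-token memory stays constant however long the context grows, which helps explain why hybrids built on such layers suit laptops and phones.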

Lightweight Flash model boosts performance at lower costs

Google introduced Gemini 3.1 Flash-Lite, a cost-optimized model designed for high-volume developer workloads, now available in preview via Google AI Studio and Vertex AI. Priced at $0.25 per million input tokens and $1.50 per million output tokens, the model delivers 2.5X faster time to first answer token and 45 percent faster output speed than Gemini 2.5 Flash while maintaining similar or better quality. On industry benchmarks, Flash-Lite scores 1432 on Arena.AI’s leaderboard and outperforms larger Gemini models from prior generations, reaching 86.9 percent on GPQA Diamond and 76.8 percent on MMMU Pro despite its smaller footprint. The model ships with adjustable thinking levels, letting developers control reasoning depth and manage costs across tasks ranging from high-frequency translation and content moderation to more complex work like UI generation and multi-step agent execution. Observers noted that while the new Flash-Lite costs less than Flash or Pro, it costs more than earlier iterations of Flash-Lite. (Google)
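To make the per-token pricing concrete, here is a small cost estimator using the preview prices quoted above. The workload (one million calls at 500 input and 200 output tokens each) is a hypothetical example, and the sketch ignores thinking tokens, caching discounts, and any other pricing tiers, so check Google’s current pricing before relying on it.

```python
# Preview prices quoted above, in US dollars per million tokens.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Dollar cost for a given number of input and output tokens (assumes flat per-token pricing)."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    # Hypothetical content-moderation workload.
    requests = 1_000_000
    total = estimate_cost(500 * requests, 200 * requests)
    print(f"${total:,.2f}")  # prints "$425.00"
```

In this example the output side ($300) costs more than the input side ($125) even though there are fewer output tokens, so keeping responses short (or thinking levels low) matters more than trimming prompts.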

Claude adds memory import for AI model switchers

Anthropic released memory import and export functionality for all Claude users across free, Pro, Max, Team, and Enterprise plans on web and desktop. Users can transfer their memory profile from other AI providers into Claude using a guided import flow, or export their Claude memory for backup or migration to competing services. The import process involves exporting memory from the current provider using a provided prompt template, pasting the results into Claude’s import interface, and allowing Claude to extract and store key information as individual memory edits within 24 hours. Claude’s memory system prioritizes work-related context and may filter out personal details unrelated to professional use, though users can manually add specific information through the memory editor. Anthropic notes that memory imports remain experimental and in active development, with no guarantee that all imported memories will be successfully incorporated. This portability feature reduces switching costs for users migrating between AI services and allows them to preserve accumulated context rather than starting fresh. (Anthropic)

GPT-5.3 Instant prioritizes natural conversation over caution

OpenAI released GPT-5.3 Instant, an update focused on improving everyday conversational quality by reducing unnecessary refusals, eliminating overly cautious preambles, and adopting a more natural tone. The model reduces hallucination rates in high-stakes domains like medicine and law by 26.8 percent when using web search, and by 19.7 percent without it. Web search integration now better contextualizes results with internal knowledge rather than simply listing links, surfacing more relevant information upfront. The update addresses problems that don’t surface in traditional benchmarks but directly affect whether users perceive ChatGPT as helpful or frustrating in daily use. GPT-5.3 Instant is available now across ChatGPT free and paid tiers and via OpenAI’s API, with GPT-5.2 Instant supported until June 3, 2026. (OpenAI)

Frontier models can easily break online anonymity

Researchers at MATS, Anthropic, and ETH Zurich showed that large language models can autonomously unmask pseudonymous online accounts by extracting identity-relevant features from unstructured text, searching for candidate matches via semantic embeddings, and reasoning over evidence to verify identities. Testing on three datasets (matching Hacker News to LinkedIn profiles, linking Reddit users across communities, and splitting single users’ Reddit histories), the LLM-based pipeline achieved up to 68 percent recall at 90 percent precision, compared to near-zero performance for classical methods. Autonomous agents correctly re-identified 226 of 338 Hacker News users whose LinkedIn profiles were hidden from the system, and matched at least nine of 125 participants in the Anthropic Interviewer dataset by analyzing interview transcripts alone. The four-stage attack pipeline uses LLMs to profile users, extract features, search candidates, and reason about matches with calibrated confidence scores, operating on raw text across arbitrary platforms without requiring structured data. The attacks cost between $1 and $4 per profile to execute, reducing what previously required hours of manual investigation by skilled researchers to automated queries completed in minutes, effectively undermining the practical obscurity that has protected online accounts. (arXiv)
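“Recall at 90 percent precision” means the system may decline to guess: it reports only its most confident matches, and the metric counts how many of all users it correctly identifies while at least 90 percent of the matches it does report are right. A minimal sketch, assuming each attempted match carries a confidence score and a ground-truth correctness label; the function name and data layout are hypothetical, and the paper’s exact evaluation procedure (for example, how it handles tied scores) may differ.

```python
def recall_at_precision(
    predictions: list[tuple[float, bool]],
    num_queries: int,
    min_precision: float = 0.9,
) -> float:
    """Highest recall reachable while precision stays >= min_precision.

    predictions: (confidence, is_correct) for each attempted match.
    num_queries: total users the system tried to identify (the recall denominator).
    Ties in confidence are broken by sort order, a simplification.
    """
    ranked = sorted(predictions, key=lambda p: p[0], reverse=True)
    best_recall = 0.0
    correct = 0
    # Lower the confidence threshold one prediction at a time.
    for reported, (_, is_correct) in enumerate(ranked, start=1):
        correct += is_correct
        precision = correct / reported
        if precision >= min_precision:
            best_recall = max(best_recall, correct / num_queries)
    return best_recall


if __name__ == "__main__":
    # Hypothetical scored matches for 10 pseudonymous users.
    preds = [(0.99, True), (0.95, True), (0.90, True), (0.80, False), (0.70, True), (0.60, False)]
    print(recall_at_precision(preds, num_queries=10))  # 3 of 10 users at 100% precision
```

Holding precision fixed makes results comparable across methods: a matcher that guesses on everyone can inflate recall, but only confident, mostly correct matches count here.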

Three senior executives leave Alibaba’s Qwen AI division in 2026

Alibaba Group lost three senior leaders from its Qwen artificial intelligence division within two months. Lin Junyang, head of the Qwen model division, and Yu Bowen, who led post-training, both resigned on Wednesday, following the January departure of staff research scientist Hui Binyuan. The exits came two days after Qwen released updated products and as the division’s mobile app reached 203 million monthly active users in February, up from 31 million in January. The app now ranks third globally, behind ChatGPT and ByteDance’s Doubao. Alibaba has released over 400 open-source Qwen models since 2023, with more than 1 billion downloads. (Reuters)

Still want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

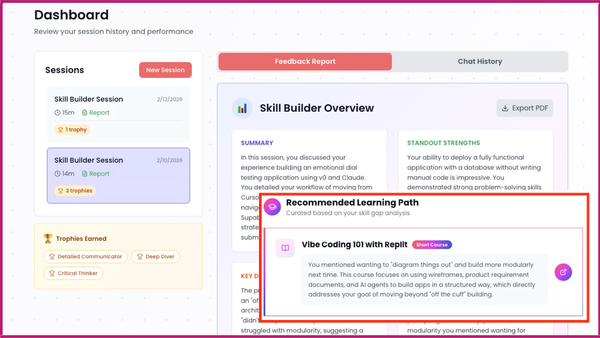

Last week, Andrew Ng talked about the release of the Skill Builder tool designed to help individuals assess and enhance their AI skills, and the importance of having guidance in navigating the rapidly evolving AI job landscape.

“When I’m learning a new skill, I find it hard to understand where I stand in the field, since I don’t yet know what I don’t know. Skill Builder addresses this for AI skills. It’s free for everyone to use, and many have reported finding the conversations informative.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

- Gemini Took the Lead as Google released Gemini 3.1 Pro in preview, topping the Intelligence Index at the same price.

- Global AI Summit Showed Optimism with India presenting itself as an AI counterweight to the U.S. and China.

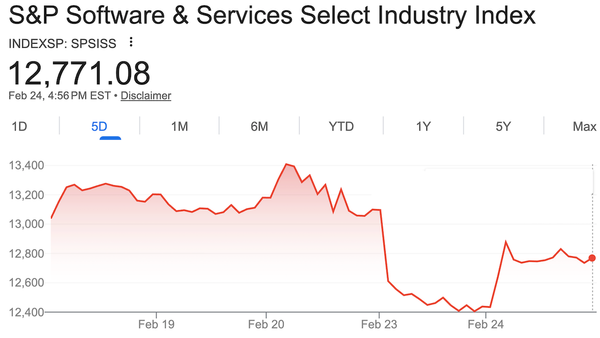

- Investors Panicked Over Agentic AI as Claude Cowork plugins triggered a SaaS stock selloff, though partnerships led to a slight rebound.

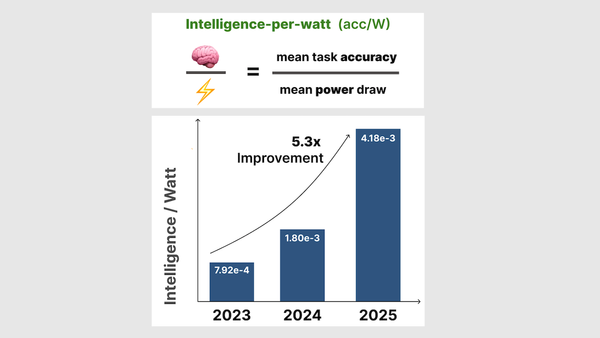

- Researchers from Stanford and Together.AI explored whether local AI could stand in for the cloud by charting edge models’ performance in intelligence per watt.

A special offer for our community

DeepLearning.AI recently launched the first-ever subscription plan for our entire course catalog! As a Pro Member, you’ll immediately enjoy access to:

- Over 150 AI courses and specializations from Andrew Ng and industry experts

- Labs and quizzes to test your knowledge

- Projects to share with employers

- Certificates to testify to your new skills

- A community to help you advance at the speed of AI

Enroll now to lock in a year of full access for $25 per month paid upfront, or opt for month-to-month payments at just $30 per month. Both payment options begin with a one-week free trial. Explore Pro’s benefits and start building today!