Perplexity Computer hits desktop, mobile, Pro: Google releases Aletheia, its top natural language math agent

DeepSeek testing favors local chipmakers. Nvidia’s reasoning-added retrieval method. Why OpenAI and Oracle shut down Stargate expansion. Meta’s giant deal with AI cloud provider Nebius.

In today’s edition of Data Points, you’ll learn more about:

- DeepSeek testing favors local chipmakers

- Nvidia’s reasoning-added retrieval method

- Why OpenAI and Oracle shut down Stargate expansion

- Meta’s giant deal with AI cloud provider Nebius

But first:

Perplexity expands Computer agent to desktop, mobile, enterprise

Perplexity announced a suite of expansions to its Perplexity Computer platform, which orchestrates 20 top AI models with web access to operate across tools autonomously. Personal Computer runs on dedicated Mac Mini hardware to access local files and apps while maintaining audit trails and kill-switch controls. Computer for Enterprise connects to existing business tools like Snowflake and Salesforce. The company also released Comet Enterprise, an AI-native browser with granular permissions and CrowdStrike integration for organizations, and launched four new APIs (Search, Agent, Embeddings, and Sandbox) that provide developers the same infrastructure powering the Computer product. Perplexity Finance gained access to 40 live financial data tools pulling directly from SEC filings, FactSet, and other authoritative sources, while Premium Sources integrations with Statista, CB Insights, and PitchBook bring paywalled research data into the platform. These products position agentic AI systems as a replacement for traditional software, moving beyond search toward persistent, autonomous task execution. (Perplexity)

Google’s Aletheia natural-language math agent officially released

Google introduced Aletheia, an AI agent built on Gemini Deep Think that moves beyond competition mathematics to conduct independent research. The system uses a three-stage agentic loop (Generator, Verifier, and Reviser) to iteratively construct and validate mathematical proofs in natural language; keeping verification as an explicit, separate step proved critical for catching errors the generator initially misses. Aletheia achieved 95.1 percent accuracy on the IMO-Proof Bench Advanced benchmark, up from the previous record of 65.7 percent, while the January 2026 version of Deep Think reduced compute requirements for Olympiad-level problems by 100x through inference-time scaling. The system integrates Google Search and web browsing to prevent citation hallucinations when synthesizing mathematical literature. The agent has already contributed to peer-reviewed research: it generated an autonomous paper on eigenweight structure constants (Feng26), provided strategic guidance for a collaborative proof on independent set bounds (LeeSeo26), and resolved four open questions from the Erdős Conjectures database when tested against 700 problems. (MarkTechPost)
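For readers who want to see the shape of a generate-verify-revise loop, here is a minimal Python sketch. It is not Google's implementation: the `prove` function, the `Verdict` type, and the three toy stand-ins are hypothetical names invented for illustration, and a trivial arithmetic check plays the role that a model-based verifier would play in a real system.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    ok: bool
    feedback: str = ""


def prove(
    problem: str,
    generate: Callable[[str], str],
    verify: Callable[[str, str], Verdict],
    revise: Callable[[str, str, str], str],
    max_rounds: int = 5,
) -> Optional[str]:
    """Return a draft the verifier accepts, or None if none is accepted in time."""
    draft = generate(problem)
    for _ in range(max_rounds):
        # Verification is a separate step from generation.
        verdict = verify(problem, draft)
        if verdict.ok:
            return draft
        # Feed the verifier's objection back into the next draft.
        draft = revise(problem, draft, verdict.feedback)
    return None


# Toy stand-ins (assumptions for illustration only, not real model calls).
def toy_generate(problem: str) -> str:
    return "1 + 2 + 3 + 4 = 9"  # deliberately wrong first draft


def toy_verify(problem: str, draft: str) -> Verdict:
    lhs, rhs = draft.split("=")
    total = sum(int(term) for term in lhs.split("+"))
    if total == int(rhs):
        return Verdict(ok=True)
    return Verdict(ok=False, feedback=f"left side sums to {total}")


def toy_revise(problem: str, draft: str, feedback: str) -> str:
    lhs = draft.split("=")[0].strip()
    corrected = feedback.rsplit(" ", 1)[1]
    return f"{lhs} = {corrected}"


if __name__ == "__main__":
    print(prove("Sum the integers 1 to 4.", toy_generate, toy_verify, toy_revise))
```

In this toy run the first draft fails verification, the reviser applies the verifier's feedback, and the second draft passes. With `max_rounds=1` the function returns `None`, because a revised draft is never returned without being checked.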

DeepSeek snubs Nvidia and AMD, favors domestic chipmakers

DeepSeek withheld pre-release access to its upcoming V4 AI model from Nvidia and AMD, breaking with the standard industry practice in which AI companies collaborate with chipmakers on optimization. The Chinese AI firm instead provided early access to domestic partners including Huawei Technologies weeks in advance, allowing Chinese chipmakers time to optimize software for their processors. A senior U.S. official told Reuters that DeepSeek’s latest model may have been trained using Nvidia’s Blackwell chips in China, potentially violating U.S. export controls, and suggested the company might claim it used Huawei hardware instead. DeepSeek gained prominence after releasing a low-cost AI model that challenged U.S. dominance, with its models downloaded over 75 million times on Hugging Face. The exclusion of U.S. chipmakers signals China’s broader strategy to reduce dependence on American technology and strengthen its domestic AI and semiconductor ecosystem amid ongoing trade tensions over advanced chip access. (Times of India)

Nvidia builds agentic retrieval pipeline that adds reasoning to semantic search

Nvidia NeMo Retriever achieved the top position on the ViDoRe v3 pipeline leaderboard and second place on the BRIGHT benchmark using a single agentic retrieval architecture. The system employs a ReACT-based loop where an LLM agent iteratively queries, evaluates, and refines searches using tools like think, retrieve, and final_results, dynamically adapting its strategy rather than relying on dataset-specific heuristics. The pipeline pairs Claude Opus 4.5 with nemotron-colembed-vl-8b-v2 embeddings to score 69.22 NDCG@10 on ViDoRe, outperforming specialized solutions that excel on single benchmarks but struggle across domains. Nvidia replaced an initial Model Context Protocol server architecture with a thread-safe singleton retriever to eliminate deployment complexity and network overhead, though each query still averages 136 seconds and consumes roughly 760,000 input tokens. The team plans to distill these reasoning patterns into smaller open-weight models to reduce latency and cost while maintaining accuracy. (Hugging Face)
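The control flow of such a loop can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Nvidia's pipeline: only the tool names `think`, `retrieve`, and `final_results` come from the description above. The `run_agent` function, the scripted policy (standing in for the LLM), and the word-overlap scorer (standing in for the embedding retriever) are all hypothetical.

```python
from typing import Callable

Corpus = dict[str, str]
History = list[tuple[str, object]]


def retrieve(corpus: Corpus, query: str, k: int = 3) -> list[str]:
    """Toy lexical retriever: rank documents by words shared with the query."""
    query_words = set(query.lower().split())
    scored = [
        (len(query_words & set(text.lower().split())), doc_id)
        for doc_id, text in corpus.items()
    ]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored if score > 0][:k]


def run_agent(
    policy: Callable[[History], tuple[str, object]],
    corpus: Corpus,
    question: str,
    max_steps: int = 8,
) -> list[str]:
    """Let the policy pick a tool each step until it calls final_results."""
    history: History = [("question", question)]
    for _ in range(max_steps):
        tool, arg = policy(history)
        if tool == "think":
            history.append(("thought", arg))
        elif tool == "retrieve":
            history.append(("observation", retrieve(corpus, str(arg))))
        elif tool == "final_results":
            return list(arg)
        else:
            raise ValueError(f"unknown tool: {tool}")
    return []  # step budget exhausted without a final answer


def scripted_policy(history: History) -> tuple[str, object]:
    """Hypothetical stand-in for the LLM: retry with a refined query on a miss."""
    question = history[0][1]
    observations = [payload for kind, payload in history if kind == "observation"]
    last_kind = history[-1][0]
    if not observations:
        if last_kind == "question":
            return "think", "Try the question verbatim first."
        return "retrieve", question
    if observations[-1]:
        return "final_results", observations[-1]
    if last_kind == "observation":
        return "think", "No hits; rephrase around the likely cause."
    return "retrieve", "cooling outage"


if __name__ == "__main__":
    docs = {
        "d1": "liquid cooling failure in winter",
        "d2": "gpu export controls",
        "d3": "data center cooling outage",
    }
    print(run_agent(scripted_policy, docs, "why did the datacenter go offline"))
```

Here the first search on the literal question finds nothing, so the policy reasons about the miss and reissues a narrower query before returning results. Each extra pass through the loop is another model call, which helps explain why per-query latency and token counts are high for agentic retrieval.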

OpenAI’s planned Stargate data center expansion canceled

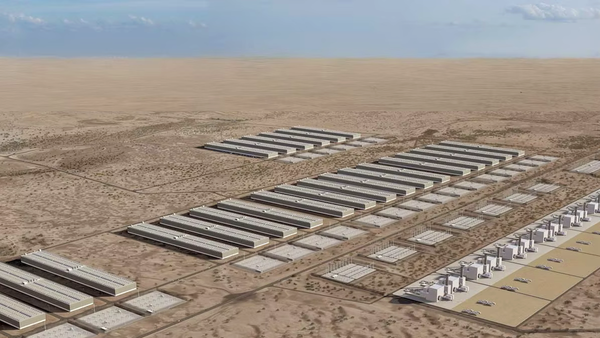

OpenAI and Oracle have stopped plans to expand their flagship data center campus in Abilene, Texas, after negotiations over financing and capacity projections broke down. The two companies had discussed increasing the facility’s power capacity from 1.2 GW to 2.0 GW since mid-2025, but changing forecasts and difficult financing terms ended the expansion discussions. Reliability issues also strained the partnership: winter weather disrupted liquid-cooling infrastructure earlier this year, forcing multiple buildings offline for several days. Despite the canceled expansion, Oracle and OpenAI’s broader partnership remains intact, with the companies still pursuing a separate agreement to develop 4.5 GW of capacity announced in July of last year. Nvidia reportedly intervened to keep the Abilene site viable for its hardware by providing data center operator Crusoe with a $150 million deposit and helping attract Meta as a potential tenant for the unused expansion capacity. Microsoft is another possible tenant, but neither company has confirmed its interest. (Tom’s Hardware)

Meta makes largest-ever cloud deal with Nebius

Meta signed a long-term agreement worth up to $27 billion with Dutch cloud provider Nebius to secure AI infrastructure capacity over the next five years. The deal includes $12 billion in dedicated compute capacity across multiple locations, featuring Nvidia’s latest Vera Rubin AI chips in what Nebius describes as one of the chips’ first large-scale deployments, plus an additional $15 billion option for available capacity. The agreement reflects rapidly growing competition among hyperscalers (including Meta, Amazon, Alphabet, and Microsoft) to secure infrastructure for AI workloads, with Meta alone planning $115 billion to $135 billion in AI-related capital spending this year. Nebius has established itself as a leading European AI cloud provider and now holds major contracts with both Meta and Microsoft (worth $19.4 billion), positioning it as a critical infrastructure supplier in the AI and cloud markets. (CNBC)

Want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

Last week, Andrew Ng talked about the introduction of the Context Hub (chub) to offer current API documentation to coding agents, the idea of a Stack Overflow-style platform for AI agents to exchange feedback, and the swift expansion of Moltbook, a social network for agents.

“A key part of the vision for chub was getting feedback from coding agents that can help other agents. Specifically, if an agent obtains a piece of documentation, tries it out, and discovers a bug, finds a superior way to use an API, or realizes the documentation is missing something, feedback reflecting these learnings can be very useful for humans updating the documentation.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

- OpenAI’s GPT-5.4 Pro and GPT-5.4 Thinking challenged Google’s Gemini 3.1 Pro Preview as the best all-around AI model, boasting higher performance at a higher price.

- The State of Mobile 2026 Report revealed that AI chatbot, search, and assistant growth was skyrocketing, outpacing traditional mobile sectors like gaming and social media.

- AI companies are building their own power plants to create data centers that operate off the grid, reducing reliance on public utilities.

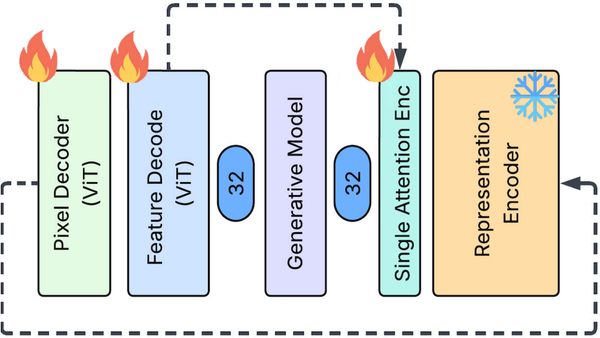

- Researchers introduced the Feature Auto-Encoder, a diffusion image generator that shrank embeddings for faster processing and enabled lightning-fast diffusion learning.

Attention!

A special event for our community

Andrew Ng and DeepLearning.AI are hosting AI Dev 26 × San Francisco, a two-day conference for AI developers taking place April 28–29 at Pier 48.

Join 3,000+ engineers, researchers, and builders working on modern AI systems. The program includes top speakers, developer relations experts, and engineers from companies including Google, AMD, Oracle, Neo4j, and Snowflake (and of course DeepLearning.AI), all sharing their latest technologies and explaining how they’re building and deploying AI systems today.

At AI Dev 26, you’ll find:

- Technical talks from engineers building AI systems in production

- Hands-on workshops exploring new tools and techniques

- Live demos from startups and AI builders

- Opportunities to meet other developers and companies in the space

Get your ticket with a special discount!

Data Points is produced by human editors with AI assistance.