Nvidia’s open AI models go quantum: Suspect arrested for attack on OpenAI HQ and CEO’s home


In today’s edition of Data Points, you’ll learn more about:

- Muse Spark, Meta’s model for AI glasses, social media, and more

- Rubber Duck, Copilot’s tool to make GPT-5.4 check Claude’s work

- Claude Managed Agents, a built-in harness for enterprise tools

- GPT-5.4-Cyber, OpenAI’s new not-for-public cybersecurity model

But first:

Nvidia applies AI to longstanding problems in quantum computing

Nvidia released Ising, a family of open AI models designed to solve two core bottlenecks in quantum computing: processor calibration and error correction. The calibration model uses a vision language model to interpret quantum processor measurements, cutting calibration time from days to hours through automated AI agents. The decoding models (optimized for either speed or accuracy) handle real-time quantum error correction up to 2.5 times faster and three times more accurately than PyMatching, the current standard. More than a dozen quantum computing companies and research labs, including Fermilab, Harvard, IQM Quantum Computers, and the UK’s National Physical Laboratory, are already deploying the models. Nvidia is positioning AI as the “operating system” for quantum machines, bridging fragile qubits to production-ready hybrid quantum-classical systems. (Nvidia)

OpenAI CEO’s home and headquarters attacked: nobody hurt, suspect charged

A 20-year-old Texas man was arrested and charged after throwing a Molotov cocktail at OpenAI CEO Sam Altman’s San Francisco residence and attempting to set fire to the company’s headquarters. Daniel Moreno-Gama was captured on surveillance video and carried a self-authored anti-AI document containing threats against Altman when police apprehended him. Investigators recovered multiple incendiary devices, kerosene, and a lighter, and Moreno-Gama told security personnel he intended to burn the building and harm people inside. U.S. Attorney Craig Missakian said that if evidence shows the attacks were meant to change public policy or coerce officials, the case will be prosecuted as domestic terrorism. The charges carry a mandatory minimum of five years and up to 20 years in prison on the explosives count alone. (Reuters)

Meta starts a smaller, currently-closed AI model series for its apps and devices

Meta announced Muse Spark, a small-but-capable language model designed as the foundation for a new Muse series. The model powers an upgraded Meta AI assistant with two modes (one for quick answers, one for complex reasoning) and can launch multiple subagents in parallel to tackle multifaceted questions like trip planning. Muse Spark includes strong multimodal perception, letting the assistant analyze images and conversations, capabilities tailor-built for Meta’s AI glasses. The assistant also integrates shopping and discovery features powered by content already within Meta’s apps, alongside local context and trending topics pulled from community posts. The model is limited to Meta’s own apps for now, beginning with AI glasses in the US immediately and expanding to Instagram, Facebook, Messenger, and WhatsApp in coming weeks, with private API access to select partners and plans to make future versions free to download. (Meta)

GitHub Copilot pairs Claude with GPT-5.4 for two-pass review

GitHub introduced Rubber Duck, an experimental feature in Copilot CLI that uses a second AI model from a different family to review coding agent decisions before execution. When you use Claude as your primary model, GPT-5.4 acts as an independent reviewer, flagging architectural flaws, edge cases, and assumptions the original model might miss. Testing on SWE-Bench Pro showed Claude Sonnet paired with Rubber Duck closed 74.7 percent of the performance gap to Claude Opus alone, with particularly strong results on complex multi-file problems spanning 70+ steps. Rubber Duck activates automatically at critical checkpoints (after planning, after complex implementations, and before test execution) or on demand when you ask for critique. The design choice to invoke it sparingly at high-signal moments keeps it from adding friction to the workflow. (GitHub)
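For readers wondering what “closed 74.7 percent of the performance gap” means in practice, here is a minimal sketch of one common way such a figure is computed. The formula is an assumption about how the number was derived, and the three scores are hypothetical placeholders chosen only to make the arithmetic visible; they are not GitHub’s reported results.

```python
def gap_closed(baseline: float, improved: float, target: float) -> float:
    """Fraction of the baseline-to-target gap recovered by the improved setup."""
    gap = target - baseline
    if gap == 0:
        raise ValueError("baseline and target are equal; the gap is undefined")
    return (improved - baseline) / gap


# Hypothetical benchmark scores for illustration only (not reported figures).
sonnet_alone = 40.0         # smaller model on its own
opus_alone = 50.0           # larger model on its own
sonnet_with_review = 47.47  # smaller model plus a second-model reviewer

share = gap_closed(sonnet_alone, sonnet_with_review, opus_alone)
print(f"{share:.1%} of the gap closed")
```

Read this way, the metric says how much of the distance between the smaller and larger model the reviewer recovers. It does not say the paired setup matches or beats the larger model.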

Claude will give you a harness to manage your own agents

Anthropic released Claude Managed Agents, a platform that provides developers with pre-built infrastructure to deploy autonomous AI systems without building the underlying harness themselves. The tool includes an agent harness, sandboxed execution environments, multi-hour cloud runtime, and monitoring dashboards, handling what head of engineering Katelyn Lesse calls “a complex distributed-systems engineering problem” that previously required dedicated teams. Notion demoed the platform running a client onboarding workflow, where agents ticked through task lists while developers monitored progress through a Claude Platform dashboard. The timing aligns with Anthropic’s push into enterprise: the company hit $30 billion in annualized recurring revenue, triple its December figure, as it races OpenAI to build out business offerings ahead of potential IPOs this year. (Wired)

GPT-5.4-Cyber, another security-focused model too hot to handle

OpenAI expanded its Trusted Access for Cyber (TAC) program to thousands of individual security professionals and hundreds of teams defending critical infrastructure. The expansion includes GPT-5.4-Cyber, a model fine-tuned specifically for defensive cybersecurity work with lowered refusal boundaries and new capabilities like binary reverse engineering. OpenAI is deploying this more permissive model iteratively, starting with vetted security vendors and researchers, while maintaining broader access to standard models with safeguards for general users. As model capabilities advance, OpenAI says it’s scaling both cyber-specific defenses and defender access in parallel, rather than waiting for a single threshold where AI becomes too dangerous to deploy. Codex Security, the vulnerability-finding tool launched earlier this year, has already contributed to fixing over 3,000 critical and high-severity vulnerabilities across the ecosystem. (OpenAI)

Still want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

Last week, Andrew Ng talked about the future of software engineering, emphasized the Product Management Bottleneck and the impact of AI on job markets, and discussed the upcoming AI Developer Conference.

“Among professions, AI is accelerating software engineering most, given the rise of coding agents. According to a new report by Citadel Research, software engineering job postings are rising rapidly. So if software engineering is a harbinger of the impact AI will have on other professions, this expansion of software engineering jobs is encouraging.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

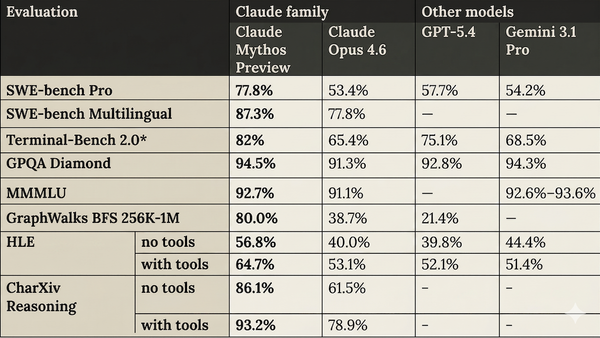

- Claude’s advanced Mythos Preview model raised security worries, leading to its limited-release-only status.

- Blind people using AI models as virtual mirrors encountered unexpected challenges, revealing pitfalls in assistive technology.

- Google’s AlphaGenome unveiled dark DNA, providing insights into DNA that regulates gene expression.

- A new fluid dynamics model solved transformers’ pixelation problem, advancing our understanding of how liquids and gases behave.

Last chance!

An important event for our community

Andrew Ng and DeepLearning.AI are hosting AI Dev 26 × San Francisco, a two-day conference for AI developers taking place April 28–29 at Pier 48.

Join 3,000+ engineers, researchers, and builders working on modern AI systems.

The program includes top speakers, developer relations experts, and engineers from companies including Google, AMD, Oracle, Neo4j, and Snowflake (and of course DeepLearning.AI), all sharing their latest technologies and explaining how they’re building and deploying AI systems today.

At AI Dev 26, you’ll find:

- Technical talks from engineers building AI systems in production

- Hands-on workshops exploring new tools and techniques

- Live demos from startups and AI builders

- Opportunities to meet other developers and companies in the space

Get your ticket with a special discount!

Data Points is produced by human editors with AI assistance.