MiniMax’s M2.7 model competes with Gemini and Opus; Cursor’s Composer 2 does the same, but for code

Microsoft’s latest image model. Google’s Stitch, a platform for designers. Claude Code Channels, another step towards OpenClaw parity. Anthropic’s denial of a back door into defense operations.

In today’s edition of Data Points, you’ll learn more about:

- Microsoft’s latest image model

- Google’s Stitch, a platform for designers

- Claude Code Channels, another step towards OpenClaw parity

- Anthropic’s denial of a back door into defense operations

But first:

MiniMax M2.7 goes proprietary, boosts its benchmark scores

Chinese AI startup MiniMax released M2.7, a proprietary reasoning model that autonomously handled 30 to 50 percent of its own reinforcement learning development workflow by building research agent harnesses, managing data pipelines, and optimizing its programming performance over 100-plus iterative rounds. The model achieved a 66.6 percent medal rate on MLE Bench Lite machine learning competitions, tying with Gemini 3.1 and approaching Claude Opus 4.6’s performance. M2.7 costs $0.30 per million input tokens and $1.20 per million output tokens through the MiniMax API, with the company claiming it runs at less than one-third the cost of GLM-5 at equivalent intelligence levels. The model integrates with over 11 developer tools including Claude Code, Cursor, and Zed, and scored 56.22 percent on the SWE-Pro software engineering benchmark while reducing hallucination rates to 34 percent compared to 46 percent for Claude Sonnet 4.6. The shift to a proprietary model marks the second recent instance of leading Chinese AI startups moving away from open source releases, following z.ai’s GLM-5 Turbo, potentially signaling a strategic pivot in an industry segment that had become known for open weights contributions. (VentureBeat)

Cursor’s agentic model offers frontier capabilities at low cost

Cursor released Composer 2, a coding model available exclusively within its agentic coding environment, with substantial price reductions and benchmark improvements. Composer 2 Standard costs $0.50/$2.50 per million input/output tokens (86 percent cheaper than Composer 1.5), while the faster Composer 2 Fast variant costs $1.50/$7.50 and is now the default experience. The model scores 61.7 on Terminal-Bench 2.0 and 73.7 on SWE-bench Multilingual, outperforming Opus 4.6 but trailing GPT-5.4. Cursor frames Composer 2 not as a universal benchmark leader but as a long-horizon agentic model tuned specifically for Cursor’s workflows. Soon after Composer 2’s release, Moonshot revealed that Composer 2 was based on a fine-tuned version of Kimi 2.5 as part of an official partnership. (VentureBeat and X)

Microsoft updates its image model to rival Google and OpenAI

Microsoft announced MAI-Image-2, a text-to-image generation model that ranks third on the Arena.ai leaderboard among research labs. The model is now available in the MAI Playground for testing, with rollout beginning on Copilot and Bing Image Creator. API access is available now for select Microsoft customers and will open to all developers on Microsoft Foundry soon. The model emphasizes three capabilities developed with input from photographers, designers, and visual storytellers: enhanced photorealism with natural lighting and accurate skin tones, reliable in-image text generation for infographics and diagrams, and rich scene generation for surreal and cinematic compositions. Microsoft positions MAI-Image-2 as reducing post-production work for creative workers by improving output quality. (Microsoft)

Google’s Stitch adds voice input for end-to-end “vibe design”

Google updated Stitch, its AI-powered design tool, positioning it as a full AI-native canvas that converts natural language prompts into high-fidelity UI designs. The platform introduces what Google calls “vibe design,” a workflow where designers describe business objectives and user emotions rather than starting with wireframes, then explore multiple directions in parallel using a new reasoning design agent that tracks project evolution. The updated canvas accepts images, text, and code as context, automatically generates interactive prototypes from static designs, and includes voice input for design iteration and critique. Google added DESIGN.md, a markdown format for design systems that integrates with other tools, and exposed Stitch via MCP server and SDK so developers can embed its capabilities into existing workflows. (Google)

Operate Claude Code remotely using Telegram and Discord

Anthropic released Claude Code Channels, a research-preview feature that routes Telegram and Discord messages into a running Claude Code session on a developer’s local machine. The system uses MCP-based plugins to push external events—chat messages, CI/CD webhooks, monitoring alerts—into an active session where Claude processes them and sends responses back through the same messaging platform. MacStories testing confirmed iOS builds, CLI operations, and audio transcription all worked from an iPhone on launch night, though the permission system creates friction: any operation requiring approval pauses the session until someone physically taps “allow” in Terminal, pushing most users toward the --dangerously-skip-permissions flag. Enterprise organizations have Channels disabled by default and must opt in through admin settings, while the plugin ecosystem remains limited to Anthropic’s allowlist during preview — no Slack, WhatsApp, or Teams yet. The release comes two months after OpenClaw creator Peter Steinberger joined OpenAI, effectively giving Anthropic a first-party answer to the viral open-source tool that demonstrated remote AI agent control but initially carried security risks. (Implicator)

Anthropic and the US Defense Department continue to spar over Claude

Anthropic filed a court response Friday asserting it cannot manipulate Claude once deployed by the US military, directly contradicting Trump administration accusations that the company could tamper with AI tools during active warfare. The company’s head of public sector stated Anthropic has no “back door” or remote kill switch, cannot access military systems to modify models, and would require both government and Amazon Web Services approval for any updates. The Pentagon designated Anthropic a supply-chain risk this month, blocking the Department of Defense and federal contractors from using Claude, claiming the company could disrupt military operations by cutting access or pushing harmful updates if it disapproves of certain uses. Anthropic filed two lawsuits challenging the ban’s constitutionality and proposed contract language guaranteeing it would not veto military tactical decisions or operational use of the model, though negotiations broke down before agreement. A federal court hearing scheduled for March 24 could determine whether the ban is temporarily reversed, but customers have already begun canceling deals. The dispute reflects fundamental disagreements over whether private AI companies should retain any leverage over government military deployments. (Wired)

Want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

Last week, Andrew Ng talked about job insecurity due to AI advancements and geopolitical uncertainties, emphasizing the importance of community and skills as stable foundations for the future.

“Amid the frenetic pace of AI advancements and multiple sources of geopolitical uncertainty, the future feels less certain at this moment than at any other I recall. In moments like this, I think about what we can build to take advantage of the exciting possibilities ahead. But I also think about what is stable that I can count on, such as community and skills.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

- Iran struck AWS facilities in Bahrain and the UAE, threatening data centers throughout the Persian Gulf region.

- Alibaba’s Qwen3.5, an open-weights MoE model, outperformed bigger models and led vision benchmarks, with sizes starting from less than 1B parameters.

- DeepSeek made its upcoming 4.0 model available for performance testing to Chinese chipmakers, excluding U.S. ones, in a snub to Nvidia.

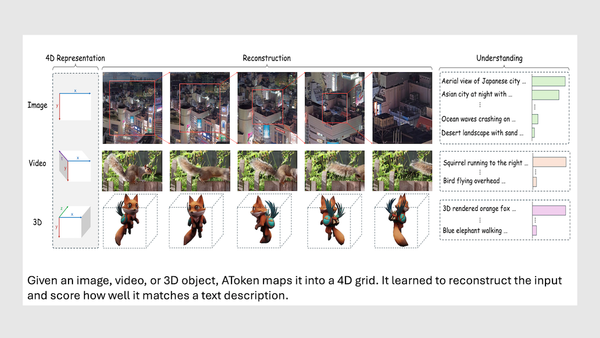

- Apple’s AToken introduced a single tokenizer for visual media, integrating a multimodal model with a unified encoder for images, videos, and 3D objects.

Attention!

A special event for our community

Andrew Ng and DeepLearning.AI are hosting AI Dev 26 × San Francisco, a two-day conference for AI developers taking place April 28–29 at Pier 48.

Join 3,000+ engineers, researchers, and builders working on modern AI systems.

The program includes top speakers, developer relations experts, and engineers from companies including Google, AMD, Oracle, Neo4j, and Snowflake (and of course DeepLearning.AI), all sharing their latest technologies and explaining how they’re building and deploying AI systems today.

At AI Dev 26, you’ll find:

- Technical talks from engineers building AI systems in production

- Hands-on workshops exploring new tools and techniques

- Live demos from startups and AI builders

- Opportunities to meet other developers and companies in the space

Get your ticket with a special discount!

Data Points is produced by human editors with AI assistance.