Mistral Measures LLM Consumption of Energy, Water, and Materials: French AI startup discloses full lifecycle consumption and emissions for Mistral Large 2

The French AI company Mistral measured the environmental impacts of its flagship large language model.

What’s new: Mistral published an environmental analysis of Mistral Large 2 (123 billion parameters) that details the model’s emission of greenhouse gases, consumption of water, and depletion of resources, taking into account all computing and manufacturing involved. The company aims to establish a standard for evaluating the environmental impacts of AI models. The study concluded that, while individual uses of the model have little impact compared to, say, using the internet, aggregate use takes a significant toll on the environment.

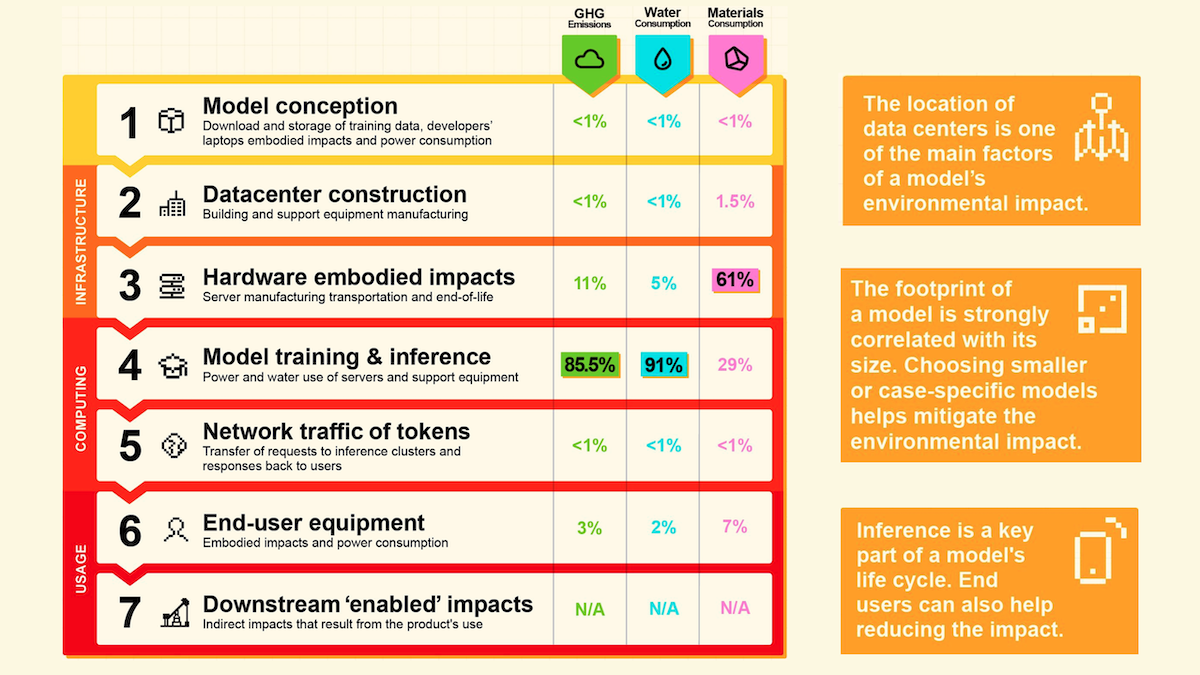

How it works: Mistral tracked the model’s operations over 18 months. It tallied impacts caused by constructing data centers, manufacturing and transporting servers, training and running the model, operating users’ equipment, and indirect effects of using the model. The analysis followed the Frugal AI methodology developed by Association Française de Normalisation, a French standards organization. Environmental consultancies contributed to the analysis, and environmental auditors peer-reviewed it.

- Training Mistral Large 2 produced 20,400 metric tons of greenhouse gases. That’s roughly equal to annual emissions from 4,400 gas-powered passenger vehicles.

- Training consumed 281,000 cubic meters of water for cooling via evaporation, roughly as much as the average U.S. family of four would consume in 500 years (1.5 cubic meters per day x 365 days x 500 years, or about 274,000 cubic meters).

- Training and inference accounted for 85.5 percent of the model’s greenhouse-gas emissions, 91 percent of its water consumption, and 29 percent of materials consumption including energy infrastructure.

- Manufacturing, transporting, and decommissioning servers accounted for 11 percent of greenhouse-gas emissions, 5 percent of water consumption, and 61 percent of overall materials consumed.

- Network traffic came to less than 1 percent of each of the three measures.

- The average prompt and response (400 tokens, or about a page of text) emitted 1.14 grams of greenhouse gases and consumed 45 milliliters (3 tablespoons) of water. The emissions are about the amount produced by watching a YouTube clip (10 seconds in the U.S. or 55 seconds in France, where low-emissions nuclear energy is more widely available). The total materials consumption was roughly equivalent to manufacturing a 2-euro coin.
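
The comparisons above rest on simple arithmetic, and the short Python sketch below reproduces it from the figures quoted in this article. The function names are hypothetical, and the linear scaling of per-prompt figures to a large number of prompts is a simplifying assumption made here for illustration, not a method taken from Mistral’s report.

```python
# Figures quoted in the article.
TRAINING_WATER_M3 = 281_000        # cubic meters of water consumed by training
HOUSEHOLD_WATER_M3_PER_DAY = 1.5   # article's figure for a U.S. family of four
TRAINING_EMISSIONS_TONS = 20_400   # metric tons of greenhouse gases from training
EQUIVALENT_VEHICLES = 4_400        # gas-powered passenger vehicles, per year
PROMPT_EMISSIONS_G = 1.14          # grams of greenhouse gases per average prompt
PROMPT_WATER_ML = 45               # milliliters of water per average prompt


def household_years(water_m3, m3_per_day=HOUSEHOLD_WATER_M3_PER_DAY):
    """Years a household would take to consume the given volume of water."""
    return water_m3 / (m3_per_day * 365)


def implied_tons_per_vehicle(total_tons=TRAINING_EMISSIONS_TONS,
                             vehicles=EQUIVALENT_VEHICLES):
    """Annual emissions per vehicle implied by the article's comparison."""
    return total_tons / vehicles


def aggregate_prompts(prompts):
    """Scale per-prompt figures linearly (an assumption made for illustration).

    Returns (metric tons of greenhouse gases, cubic meters of water).
    """
    tons = prompts * PROMPT_EMISSIONS_G / 1_000_000   # grams -> metric tons
    cubic_meters = prompts * PROMPT_WATER_ML / 1_000_000  # milliliters -> m^3
    return tons, cubic_meters


if __name__ == "__main__":
    print(f"Household-years of water: {household_years(TRAINING_WATER_M3):.0f}")
    print(f"Implied tons per vehicle per year: {implied_tons_per_vehicle():.2f}")
    tons, m3 = aggregate_prompts(1_000_000_000)  # hypothetical prompt count
    print(f"One billion prompts: {tons:,.0f} tons, {m3:,.0f} cubic meters")
```

Under these figures, the training water works out to roughly 513 household-years, and the vehicle comparison implies about 4.6 metric tons per vehicle per year. The one-billion-prompt count is a made-up input that shows how small per-prompt impacts add up in aggregate.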

Yes, but: Mistral acknowledged a few shortcomings of the study. It struggled to calculate some impacts due to the lack of data and established standards. For instance, a reliable assessment of the environmental impact of GPUs is not available.

Behind the news: Mistral’s report follows a string of studies that assess AI’s environmental impact.

- While AI is likely to consume increasing amounts of energy, it could also produce huge energy savings in coming years, according to a report by the International Energy Agency, which advises 44 countries on energy policy.

- A 2023 analysis by researchers at the University of California and the University of Texas quantified GPT-3-175B’s consumption of water. The conclusions of that work align with those of Mistral’s analysis.

- A 2021 paper identified ways to make AI models up to a thousand-fold more energy-efficient by streamlining architectures, upgrading hardware, and boosting the energy efficiency of data centers.

Why it matters: AI consumes enormous amounts of energy and water, and finding efficient ways to train and run models is critical to ensure that the technology can benefit large numbers of people. Mistral’s analysis offers a standardized approach to assessing the environmental impacts. If it’s widely adopted, it could help researchers, businesses, and users compare different models, work toward more environmentally friendly AI, and potentially reduce overall impacts.

We’re thinking: Data centers and cloud computing are responsible for 1 percent of the world’s energy-related greenhouse gas emissions, according to the International Energy Agency. That’s a drop in the bucket compared to agriculture, construction, or transportation. Nonetheless, having a clear picture of AI’s consumption of resources can help us manage them more effectively as demand rises. It’s heartening that major AI companies are committed to using and developing sustainable energy sources and using them efficiently, and the environmental footprint of new AI models and processors is falling steadily.