Anthropic, U.S. square off over AI safeguards: Mercury 2, an ultra-fast diffusion language model

Remote Control, a tool to vibe-code from your phone. Anthropic’s charge that DeepSeek and others distilled its models. Qwen 3.5’s suite of medium MoE models. Protenix-v1, ByteDance’s open-source take on AlphaFold 3.

In today’s edition of Data Points, you’ll learn more about:

- Remote Control, a tool to vibe-code from your phone

- Anthropic’s charge that DeepSeek and others distilled its models

- Qwen 3.5’s suite of medium MoE models

- Protenix-v1, ByteDance’s open-source take on AlphaFold 3

But first:

Anthropic faces an ultimatum from the U.S. government

U.S. Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei a Friday deadline to grant the military unrestricted access to Claude, according to sources cited by Axios. The Department of Defense threatened to invoke the Defense Production Act—a Cold War-era law granting the government broad authority over private industrial capacity—or designate Anthropic as a “supply chain risk” if the company refuses. Meanwhile, Amodei has said he will not allow Anthropic’s technology to be used for mass surveillance or automated warfare. The ultimatum represents an escalation in government pressure on AI companies to provide direct access to their systems, setting a precedent that could force other developers to compromise their safety policies or operational independence. (Axios and Reuters)

Mercury 2, a diffusion reasoning model, has breakthrough speed

Inception Labs launched Mercury 2, a language model that uses parallel diffusion-based generation instead of autoregressive decoding to run inference more than five times faster than traditional LLMs. The model generates 1,009 tokens per second on NVIDIA Blackwell GPUs and is priced at $0.25 per million input tokens and $0.75 per million output tokens, with a 128,000-token context window and native tool use. Rather than generating tokens sequentially from left to right, Mercury 2 uses parallel refinement to produce multiple tokens simultaneously over a small number of steps. The model targets use cases where latency compounds across multiple inference calls, including agentic loops, real-time voice interfaces, code autocomplete, and retrieval pipelines. In these settings, cutting per-call latency increases how many reasoning steps are economically viable within overall time and response constraints. (Inception Labs)
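To make the pricing and throughput figures concrete, here is a rough back-of-the-envelope sketch. The prices and tokens-per-second value come from the item above; the workload (20 sequential agent steps, each with 4,000 input and 500 output tokens) and the function names are hypothetical, and the timing ignores network and prompt-processing overhead.

```python
# Figures quoted above for Mercury 2.
INPUT_USD_PER_MILLION = 0.25
OUTPUT_USD_PER_MILLION = 0.75
TOKENS_PER_SECOND = 1009


def call_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one call at the quoted per-million-token prices."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


def generation_seconds(output_tokens: int,
                       tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Time spent generating output only (no network or prompt overhead)."""
    return output_tokens / tokens_per_second


def loop_totals(steps: int, input_tokens: int, output_tokens: int):
    """Total cost and generation time for a loop of sequential calls."""
    cost = steps * call_cost_usd(input_tokens, output_tokens)
    seconds = steps * generation_seconds(output_tokens)
    return cost, seconds


if __name__ == "__main__":
    # Hypothetical workload: 20 sequential agent steps.
    cost, seconds = loop_totals(steps=20, input_tokens=4_000, output_tokens=500)
    print(f"cost: ${cost:.4f}, generation time: {seconds:.1f} s")
```

Because the calls in an agentic loop run one after another, per-call generation time multiplies by the number of steps, which is why throughput matters more here than in a single-shot request.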

Claude Max subscribers get mobile control of Claude Code

Anthropic released Remote Control, allowing Claude Max subscribers ($100-$200 monthly) to control Claude Code sessions from iPhone and Android smartphones while the agent continues running on their desktop machines. The feature works by establishing a secure bridge: users run `claude remote-control` in their terminal, scan a QR code, and maintain full access to their local filesystem and Model Context Protocol servers from their mobile device. Remote Control will expand to Claude Pro ($20 monthly) subscribers soon but remains unavailable for Team or Enterprise plans during this preview phase. The launch reinforces Claude Code’s popularity: it now has 29 million daily installs in Visual Studio Code, and analyses suggest the agent now authors four percent of all public GitHub commits worldwide. (VentureBeat)

Anthropic accuses rivals of unauthorized distillation of its models

Anthropic detected three Chinese AI labs (DeepSeek, Moonshot, and MiniMax) conducting large-scale distillation attacks that generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts. The campaigns, which aimed to mimic Claude’s reasoning, tool use, and coding capabilities, violated Anthropic’s terms of service and regional access restrictions and used commercial proxy services and “hydra cluster” architectures to evade detection. DeepSeek’s operation included prompts designed to generate chain-of-thought training data and censorship-safe alternatives to politically sensitive queries, while MiniMax’s campaign of 13 million exchanges pivoted within 24 hours when Anthropic released a new model. Anthropic argues these attacks undermine U.S. export controls by allowing foreign labs to acquire advanced AI capabilities stripped of safety guardrails, which can then feed into military, intelligence, and surveillance systems. (Anthropic)

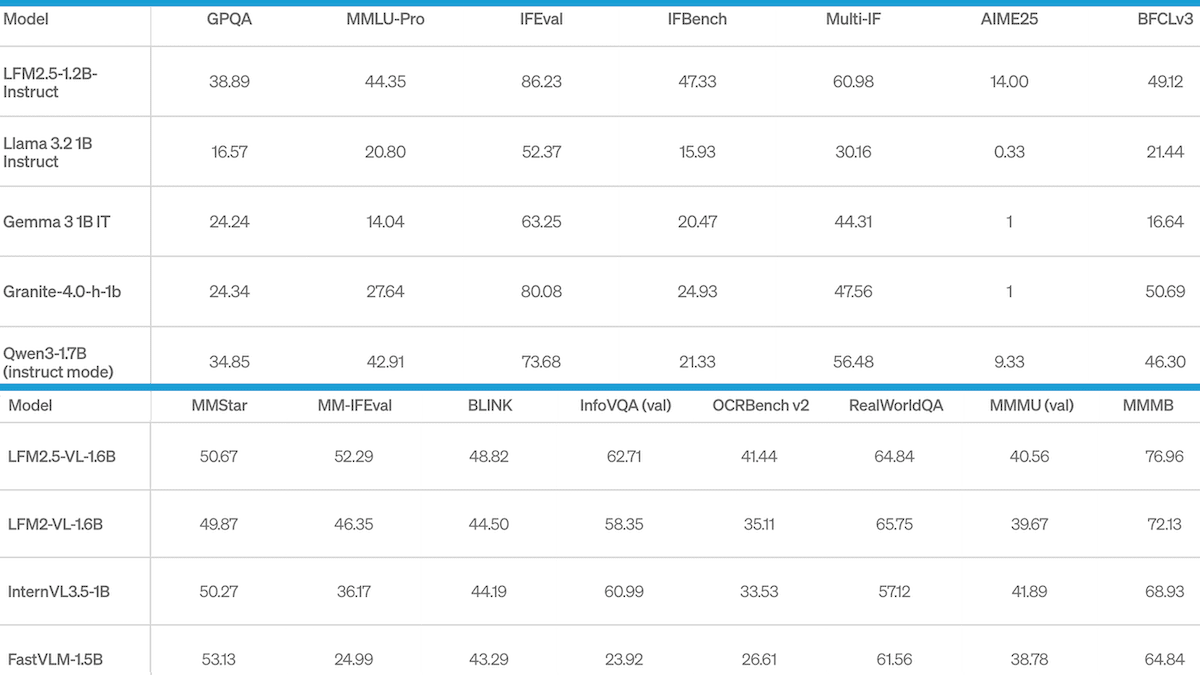

Qwen fleshes out 3.5 family with efficient mid-sized offerings

Alibaba’s Qwen team released the Qwen 3.5 Medium Model Series, featuring models from 27 billion to 122 billion total parameters that use a Mixture-of-Experts architecture to activate only a subset of those parameters during inference. The standout Qwen3.5-35B-A3B activates just 3 billion parameters per inference pass yet outperforms the previous-generation Qwen3-235B-A22B model, which activated 22 billion parameters, a more than 7x improvement in reasoning density. The architecture combines Gated Delta Networks with standard attention blocks to enable faster decoding and lower memory usage. Qwen3.5-Flash, the production-ready hosted version, offers a 1 million token context window by default and includes native function calling for API integration. The efficiency gains mean developers can run frontier-level reasoning on standard infrastructure rather than requiring massive compute clusters. (MarkTechPost)
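The "more than 7x" figure follows from the ratio of active parameters (22 billion versus 3 billion). The short sketch below reproduces that arithmetic. It assumes the model names follow a `<total>B-A<active>B` pattern; the item above confirms only the active-parameter counts, so reading the total from the name is an assumption, and the helper names are hypothetical.

```python
import re


def parse_moe_name(name: str) -> tuple[float, float]:
    """Return (total, active) parameters in billions from a name such as
    'Qwen3.5-35B-A3B'. Assumes a '<total>B-A<active>B' naming pattern."""
    match = re.search(r"(\d+(?:\.\d+)?)B-A(\d+(?:\.\d+)?)B", name)
    if match is None:
        raise ValueError(f"no '<total>B-A<active>B' pattern in {name!r}")
    return float(match.group(1)), float(match.group(2))


def active_param_ratio(older: str, newer: str) -> float:
    """How many times fewer parameters the newer model activates per pass."""
    _, older_active = parse_moe_name(older)
    _, newer_active = parse_moe_name(newer)
    return older_active / newer_active


if __name__ == "__main__":
    ratio = active_param_ratio("Qwen3-235B-A22B", "Qwen3.5-35B-A3B")
    print(f"active-parameter ratio: {ratio:.2f}x")
```

The ratio describes compute activated per pass, not benchmark quality; the claim that the smaller model outperforms the larger one comes from the reported results, not from this arithmetic.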

ByteDance opens up AlphaFold3-level protein prediction

ByteDance released Protenix-v1, a free and open-source tool that matches the performance of AlphaFold 3, one of the most advanced AI models for predicting how proteins fold. Unlike AlphaFold 3, it offers more transparency about its design and training, and all code and trained weights are freely available for anyone to use and modify. Protenix-v1 can predict the three-dimensional shapes of proteins, DNA, RNA, and drug molecules. Its predictions become more accurate when it is given more time to sample different possibilities, letting researchers choose between speed and accuracy based on their needs. The team also released PXMeter, a benchmarking toolkit that compares different structure prediction models using over 6,000 carefully curated molecular structures. The release also includes tools for designing new proteins that bind to targets and for performing molecular docking simulations, as well as lightweight versions that run faster on limited computing resources. (MarkTechPost)

Still want to know more about what matters in AI right now?

Read the latest issue of The Batch for in-depth analysis of news and research.

Last week, Andrew Ng talked about AI’s potential to create new job opportunities, the moral responsibility to support those affected by AI-driven changes, and the growing demand for digital services and custom software development.

“The evolution of AI and software continues to accelerate, and the set of opportunities for things we can build still grows every day. I’ve stopped writing code by hand. More controversially, I’ve long stopped reading generated code. I realize I’m in the minority here, but I feel like I can get built most of what I want without having to look directly at coding syntax, and I operate at a higher level of abstraction using coding agents to manipulate code for me.”

Read Andrew’s letter here.

Other top AI news and research stories covered in depth:

- GLM-5 Scaled Up as Z.ai’s updated model achieved the top open-weights Intelligence Index score, setting a new benchmark in AI capabilities.

- Big AI Spent Big On Lobbying with Meta, Amazon, Microsoft, Google, and Nvidia investing millions to influence government policies and regulations.

- Faster Reasoning at the Edge Was Achieved by Liquid AI’s innovative model that combined attention with convolutional layers for enhanced efficiency.

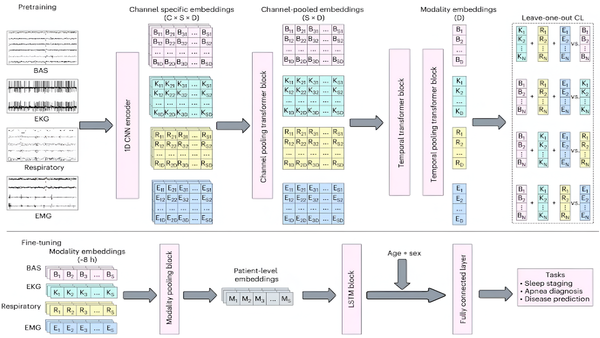

- Sleep Signals Predicted Illness through SleepFM, which detected signs of neurological disorders years before symptoms manifested, offering a breakthrough in preventative healthcare.